One Engineer. A Full AI Engineering Team.

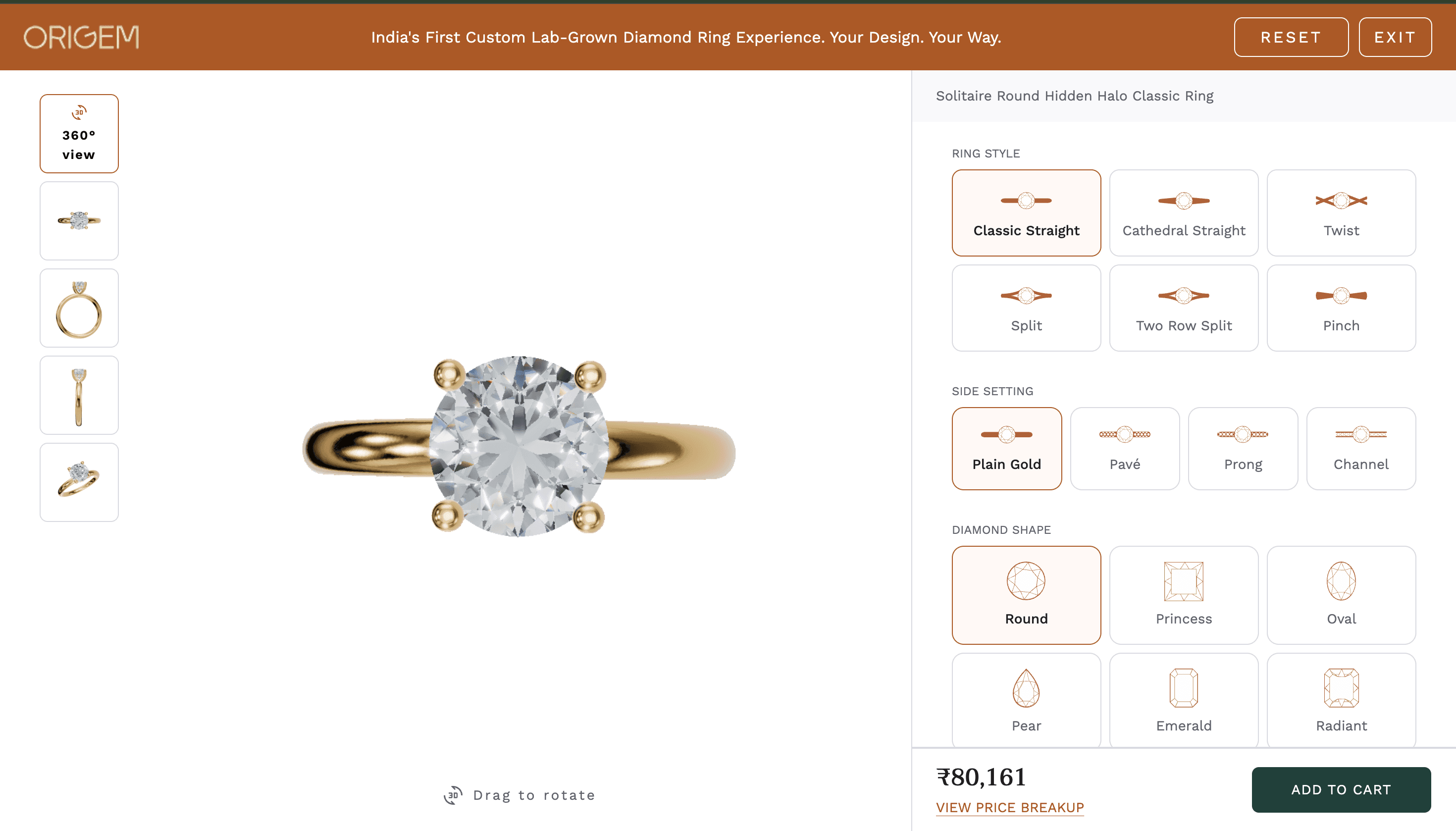

139,968 ring variants. Physically accurate chromatic light dispersion. A GLSL shader running 5 ray bounces per diamond facet at 60fps. A 3.8GB model library compressed and served from S3. I built this alone — except I was never really alone.

I had a full engineering team. It just happened to be made entirely of AI.

What I Was Actually Building

Not a spinning product image. A live 3D scene where the diamond refracts light like a real stone — the rainbow fire that makes a customer stop scrolling and think "that looks real."

The configurator covers every combination of ring style, side setting, diamond shape, center diamond size, crown setting, metal color, metal karat, and ring size. 139,968 variants, all connected to a live pricing feed, all renderable in the browser. The largest single model — a 3.0ct Hidden Halo Princess — weighs 51MB. The smallest is 56KB. The total library before cleanup was 3.8GB.

At several points I thought: this is too ambitious. Instead of shrinking the scope, I changed how I built.

The Pipeline: Four Players, One Leader

No single AI could build this. So I stopped thinking of AI as a tool and started treating it as a team I needed to lead.

Claude Code lived inside the codebase, understood every file and architectural decision, and executed the deep implementation work. Manus handled structured research: physics, optics benchmarks, rendering approaches. ChatGPT ran visual critique and realism scoring on recorded renders. I handled direction, judgment, synthesis, and taste.

They didn't build this. I led them to build it.

Claude Code: The Engineer I Directed

Claude understood my codebase the way a senior engineer who's been on a project for months understands it. That persistent context — every component, every architectural decision — is what made it useful beyond code generation.

But I never just said "build me a shader." I defined physical requirements, performance limits, visual targets, the trade-offs I was willing to make. Then I directed the implementation. We debated approaches. I pushed back on some, insisted on others.

The diamond shader isn't JavaScript. It's GLSL — raw code running directly on the GPU, executing across thousands of cores simultaneously, doing physics math for every pixel of every diamond facet at 60fps. Writing that kind of code requires knowing both the physics of light and how to express that physics in GPU instructions. Manus gave me the physics foundation. I synthesized it into a precise brief. Claude translated it into working GPU code.

When reflections weren't tracking correctly as the ring rotated — subtle, visually wrong in a way I could feel before I could explain — I studied it, brought the observation to Claude, pushed for root cause rather than a patch. We found a world-space transform bug deep in the shader. Fixed properly, not band-aided.

Every technical win followed the same pattern: I detected the problem, set the constraints, directed the investigation, chose the approach. Claude executed with depth and speed I couldn't match alone. The judgment was always mine.

Performance Engineering: Real Numbers

3D ring models are heavy. A typical head-and-shank combination runs 1.6–2.1MB. The 3.0ct Hidden Halo Princess is 51MB. Load those naively and most mobile users bounce before the scene renders.

I didn't ask Claude to "make it smaller." I set hard constraints: maintain visual quality, reduce file size, lower CloudFront and Vercel bandwidth costs, work across every device tier.

What came out was a proper compression pipeline — geometry optimization that strips redundant vertex data without changing what you see, texture compression and resizing based on where each element appears in the scene.

The more interesting solution was the cubemap resolution strategy. The diamond shader uses a pre-baked cubemap to sample the environment during ray bouncing. Each diamond runs 5 bounces — hardcoded — and the cubemap is sampled at each bounce. On desktop, we use 512×512. On mobile for diamonds at or above 3.0ct, we cap at 384×384. On mobile we also switch from FloatType to UnsignedByteType — 8-bit only, safe across all mobile GPUs. Accent and side diamonds always render at 256×256.
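A minimal sketch of that resolution strategy as a single lookup function. The type and function names here are hypothetical, and the string values only mirror the Three.js constant names rather than importing them. The text does not say what size mobile center stones under 3.0ct use, so the 512 fallback for that case is an assumption.

```typescript
// Hypothetical names throughout; only the numeric values come from the article.
type DeviceTier = "desktop" | "mobile";
type DiamondRole = "center" | "accent"; // "accent" covers accent and side stones
type TextureType = "FloatType" | "UnsignedByteType"; // mirrors Three.js constant names

interface CubemapConfig {
  size: number; // edge length of each cubemap face, in pixels
  textureType: TextureType;
  bounces: number;
}

const RAY_BOUNCES = 5; // hardcoded, per the article
const LARGE_STONE_CARATS = 3.0; // threshold where the mobile cap applies

function cubemapConfig(
  device: DeviceTier,
  role: DiamondRole,
  carats: number
): CubemapConfig {
  // Mobile drops to 8-bit textures; desktop keeps float precision.
  const textureType: TextureType =
    device === "mobile" ? "UnsignedByteType" : "FloatType";

  let size: number;
  if (role === "accent") {
    size = 256; // accent and side diamonds always use 256x256
  } else if (device === "mobile" && carats >= LARGE_STONE_CARATS) {
    size = 384; // mobile cap for large center stones
  } else {
    // ASSUMPTION: the article only states 512 for desktop. This sketch
    // reuses 512 for mobile center stones below 3.0ct.
    size = 512;
  }
  return { size, textureType, bounces: RAY_BOUNCES };
}
```

Keeping the decision in one pure function makes the device rules easy to test without a GPU in the loop.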

The 3.0ct mobile cap exists because we hit Chrome crashes without it. Not a theoretical limit — a real one we found in testing.

The normal capture cache saves 300–500ms per diamond by pre-rendering all 6 cubemap faces and reusing them on subsequent loads instead of re-running the GPU capture. On mobile, we block with gl.finish() to ensure the capture completes before sampling. On desktop we use the non-blocking gl.flush().
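A rough sketch of how such a cache might be structured. `gl.finish()` and `gl.flush()` are real WebGL methods, but the `NormalCaptureCache` class, the string key, and the in-memory `Map` are assumptions for illustration; the article does not describe how the captures are actually stored.

```typescript
// The two WebGL calls this sketch needs. A real WebGLRenderingContext
// satisfies this interface.
interface GlSync {
  finish(): void; // blocks until all queued GPU commands have completed
  flush(): void; // submits queued commands without waiting for them
}

// Hypothetical cache: one captured normal cubemap per geometry key.
class NormalCaptureCache<T> {
  private readonly captures = new Map<string, T>();

  getOrCapture(
    key: string,
    gl: GlSync,
    isMobile: boolean,
    capture: () => T // stands in for rendering all 6 cubemap faces
  ): T {
    const cached = this.captures.get(key);
    if (cached !== undefined) {
      return cached; // reuse: skips the GPU capture entirely
    }
    const result = capture();
    if (isMobile) {
      gl.finish(); // mobile: wait for the capture before anything samples it
    } else {
      gl.flush(); // desktop: submit and keep going
    }
    this.captures.set(key, result);
    return result;
  }
}
```

The sync call only runs on a cache miss, so the blocking cost on mobile is paid once per diamond rather than on every load.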

Bloom is fully disabled on iOS. On mobile generally, bloom levels drop from 2 to 1 and the luminance threshold rises from 1.0 to 1.2. These aren't aesthetic choices — they're the difference between 40fps and dropping below 30.
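Those bloom rules can be written down as a small settings function. This is a sketch: the `DeviceInfo` and `BloomSettings` shapes are hypothetical, and how the flags get detected is left out. Only the levels and threshold values come from the text.

```typescript
// Hypothetical shapes; device detection itself is out of scope here.
interface DeviceInfo {
  isMobile: boolean;
  isIOS: boolean;
}

interface BloomSettings {
  enabled: boolean;
  levels: number; // number of bloom levels
  luminanceThreshold: number; // brightness a pixel needs before it blooms
}

function bloomSettings(device: DeviceInfo): BloomSettings {
  if (device.isIOS) {
    // Bloom is fully off on iOS. The other fields are placeholders
    // (an assumption) since nothing reads them when bloom is disabled.
    return { enabled: false, levels: 0, luminanceThreshold: 1.2 };
  }
  if (device.isMobile) {
    // Other mobile devices: fewer levels, higher threshold.
    return { enabled: true, levels: 1, luminanceThreshold: 1.2 };
  }
  // Desktop baseline.
  return { enabled: true, levels: 2, luminanceThreshold: 1.0 };
}
```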

I made sure Claude understood both goals simultaneously: visual quality and business viability. That's how a good engineer thinks. I made sure my AI engineer thought that way too.

Manus: The Research That Changed the Architecture

Before Manus, I was pushing Three.js standard material parameters — IOR values, metalness, roughness, transparency. I spent weeks on it. It looked fine. It didn't look real.

There's a ceiling on what standard materials can produce for something as optically complex as a diamond. I was hitting it without knowing it existed.

So I asked Manus a different question: what do the best diamond rendering implementations actually do?

What came back changed the entire project. Pre-baking the diamond's geometry normals into a cubemap, then sampling that cubemap during the ray bounce loop at runtime. Instead of expensive real-time geometry intersection every frame, you capture the surface information once. Fast, accurate, physically grounded. Manus also surfaced the actual physical values — real refractive index, dispersion characteristics across the color spectrum, how environment maps need different orientation for gems versus metals, what tone mapping preserves fire without clipping brightness.
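To make the bounce loop concrete, here is a CPU-side sketch in TypeScript rather than the actual GLSL. The `sampleNormal` callback is a hypothetical stand-in for the cubemap lookup, `refract` follows the same convention as the GLSL built-in, and 2.417 is the commonly cited refractive index of diamond. None of this is the shader from the project; it only illustrates the technique under those assumptions.

```typescript
type Vec3 = [number, number, number];

const dot = (a: Vec3, b: Vec3): number =>
  a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
const scale = (a: Vec3, s: number): Vec3 => [a[0] * s, a[1] * s, a[2] * s];
const sub = (a: Vec3, b: Vec3): Vec3 => [a[0] - b[0], a[1] - b[1], a[2] - b[2]];

// Mirror reflection of incident direction i about unit normal n.
const reflect = (i: Vec3, n: Vec3): Vec3 => sub(i, scale(n, 2 * dot(n, i)));

// Same convention as GLSL refract(): n faces against i, eta = n_from / n_to.
// Returns null on total internal reflection (GLSL returns a zero vector).
function refract(i: Vec3, n: Vec3, eta: number): Vec3 | null {
  const cosI = dot(n, i);
  const k = 1 - eta * eta * (1 - cosI * cosI);
  if (k < 0) return null;
  return sub(scale(i, eta), scale(n, eta * cosI + Math.sqrt(k)));
}

const DIAMOND_IOR = 2.417; // commonly cited value for diamond
const MAX_BOUNCES = 5; // matches the bounce count in the article

// sampleNormal is hypothetical: given a ray direction inside the stone,
// it returns the outward unit normal of the facet that ray hits. In the
// approach described above, a pre-baked cubemap answers this question.
function traceInside(
  dirIn: Vec3,
  sampleNormal: (dir: Vec3) => Vec3
): { exitDir: Vec3; bounces: number } {
  let dir = dirIn;
  for (let bounce = 0; bounce < MAX_BOUNCES; bounce++) {
    const n = scale(sampleNormal(dir), -1); // flip to face against the ray
    const out = refract(dir, n, DIAMOND_IOR); // diamond to air: eta = 2.417 / 1
    if (out !== null) return { exitDir: out, bounces: bounce };
    dir = reflect(dir, n); // trapped: bounce off the facet and try again
  }
  return { exitDir: dir, bounces: MAX_BOUNCES }; // give up after the cap
}
```

Because diamond's critical angle is only about 24 degrees, most internal rays reflect several times before escaping, which is why a fixed bounce budget matters.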

This wasn't incremental improvement. It was a fundamentally different approach that only became visible through proper research. Without Manus, I would have kept pushing standard material parameters and wondering why the diamond never looked quite right.

ChatGPT: Visual QA After I Stopped Seeing Clearly

After hours staring at the same render, objectivity disappears. You start seeing what you want to see.

After every significant iteration I recorded a short clip of the diamond rotating, fed it to ChatGPT, and asked one question: rate the realism from 0 to 100%, and tell me specifically what's missing.

The feedback was direct every time. Color separation felt weak — I directed wider dispersion between channels in the shader. The top facet was too dark compared to real diamonds — I pushed on how light bounce weights were applied. A slight cloudiness pointed to absorption behavior that needed adjusting. The most consequential note: reflections looked frozen during rotation, as if the environment wasn't following the ring. That led back to Claude, a root cause investigation, and finding the world-space transform bug in the GPU code. One pass flagged slight overexposure — tone mapping dialed back.
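One way to picture "wider dispersion between channels" is to give each color channel its own refractive index. The sketch below assumes a single hypothetical `spread` control around a base index. The function names and the spread values are illustrative, not the numbers used in the actual shader and not measured diamond data.

```typescript
interface ChannelIors {
  r: number;
  g: number;
  b: number;
}

// Blue light bends more than red, so blue gets the higher index.
// spread is a hypothetical artistic control; 0 disables dispersion.
function channelIors(baseIor: number, spread: number): ChannelIors {
  return { r: baseIor - spread, g: baseIor, b: baseIor + spread };
}

// Snell's law for a ray leaving the stone into air: sin(out) = ior * sin(in).
// Returns null when the ray is totally internally reflected.
function exitAngle(ior: number, incidence: number): number | null {
  const s = ior * Math.sin(incidence);
  return s > 1 ? null : Math.asin(s);
}

// Angular gap between the blue and red exit rays, in radians.
function fireSeparation(
  baseIor: number,
  spread: number,
  incidence: number
): number | null {
  const { r, b } = channelIors(baseIor, spread);
  const red = exitAngle(r, incidence);
  const blue = exitAngle(b, incidence);
  return red === null || blue === null ? null : blue - red;
}
```

Doubling `spread` widens the gap between where red and blue leave the stone, which is the visual effect the feedback was asking for.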

First working version: around 60% realism. After dozens of loops: 90%+.

Not because AI improved it automatically. Because I kept running the loop and refused to accept good enough.

What This Replaced

| Role | How I Covered It |
|---|---|
| Senior Graphics Engineer | Me directing Claude Code |
| WebGL Specialist | Me guiding Claude through pipeline decisions |
| GLSL Shader Developer | Manus physics research + Claude executing, led by me |
| Rendering Researcher | Me directing Manus |
| Performance Engineer | Me setting constraints, Claude executing |
| Visual QA / Art Director | Me running review loops with ChatGPT |
| Product Engineer | Me orchestrating Claude on variant logic, pricing, engraving |

Seven roles. In a traditional setup: multiple salaries, recruiting cycles, onboarding, alignment meetings, iteration cycles measured in sprints.

In this pipeline: research happened in hours. Implementation followed the same day. Visual feedback needed no scheduling. Iteration cycles ran in hours. Zero coordination overhead — because I was the coordination layer, and I cared too much to let anything get lost.

What AI Couldn't Do

Let me be specific about where the human work was irreplaceable.

AI didn't hold the product vision across months of sessions. It didn't decide when the diamond finally looked real enough. It couldn't feel the subtle wrongness of a reflection that was 5% off. It didn't know when a technically correct answer was still the wrong choice for this specific product.

The hardest work was translating "the fire looks flat" into a precise shader change. Translating Manus research into a brief Claude could act on. Knowing when to push back on an AI answer that felt wrong. Holding the standard high when close enough was tempting. Re-injecting vision at the start of every session because AI doesn't carry long-term intent automatically.

AI provides depth. Direction is the human's job. Without direction, depth is expensive noise.

What Actually Changed

The breakthrough wasn't technical. It was structural.

I stopped asking "can AI build this?" and started asking "how do I structure this so AI can execute it correctly?"

The conductor doesn't play any instrument better than the musicians. But without a conductor you don't get a worse performance; you get noise. Conducting is what turns individual capability into something coherent.

This isn't automation. It's orchestration. The difference is whether a human with real taste and real standards is leading it.

I'm not a trained graphics engineer. I've never shipped a AAA game. But I led the creation of a diamond rendering system — real GLSL code built from physics research, device-specific cubemap resolution, a compression pipeline serving 139,968 variants from S3 — that's competitive with the best jewelry configurators on the web.

One person. One vision. A full AI engineering team.