Our Job Isn't to Write Code Anymore. It's to Think Clearly.

I've been using Claude Code in my day-to-day development for a while now. The capabilities are genuinely impressive. It reads entire codebases, writes files across multiple directories, runs terminal commands, connects to Figma for design specs, spawns parallel agents, and enforces coding standards through automated hooks. It can take a feature described in plain English and build it end to end.

That's where most of us are right now. We have these incredibly capable tools, and we're using them every day.

But over the past several months, I've noticed something that I keep coming back to.

When AI finished a task, and it usually did finish, I'd look at the output and realize I didn't fully understand what it had done. The code worked, mostly. But the approach wasn't mine. The file structure wasn't what I would've chosen. The naming, the patterns, the way things were wired together: those were AI's decisions, not mine.

And when something broke, I found myself reading the code like it was someone else's codebase. Because, in a way, it was.

This isn't a complaint about AI. The code was often fine. Sometimes better than what I would've written myself. But there was this gap between what got built and what I actually understood. That gap kept bothering me.

Why this keeps happening

I started wondering about it. Why does this gap exist?

And I think it comes down to something simple. When we go straight to "build this feature," we're not just handing over the typing. We're handing over the thinking. We describe the what: "Add a testimonial section with a carousel and star ratings." AI fills in everything else: the how, the structure, the approach, the edge cases. It fills gaps we didn't even know were there.

That's fine when it gets things right. But when it doesn't, and it won't always, we're stuck debugging decisions we never made. We're reverse-engineering a thought process that wasn't ours to begin with.

I think most of us have felt some version of this. AI completes 60-80% of a task, and it looks good. But the remaining 20-40% turns into a longer effort than expected, because we're not fixing our own work. We're fixing work we don't fully understand.

What I think is changing

Here's something I've been sitting with for a while now. Writing code was never really the hard part of development. The hard part was always thinking clearly about what to build and how to build it. We just didn't notice because writing the code took so long that it felt like the main work.

Now AI handles the writing. And what's left is the thinking.

I don't think the developer's role is going away. I think it's shifting. From executing to directing. From being the person who writes the code to being the person who knows what needs to be built, why it needs to be built, and how it should come together.

The way I see it, the job now looks something like this. Define the problem. Find the solution. Chart the approach. And then let AI execute within those boundaries.

That doesn't mean you stop understanding the code. In fact, it's the opposite. When something breaks, and it will, you need to be able to read what was built and figure out what went wrong. A developer who directs but can't understand the output can't course-correct. The ability to execute is still important. You're just choosing to direct instead, because that's where your time creates the most value.

Trying something different

So I tried changing the order of things.

Before asking AI to build anything, I started getting really clear on what I wanted. Not just the feature, but the approach, the files that should be created, the patterns that should be followed, how the CSS should be structured, where the JavaScript should live.

I started treating clarity as a step in the workflow. Not something I assumed AI would figure out on its own.

And it changed how the output felt.

When the plan was clear before execution started, the code that came back made sense to me. I could read it and know why each file existed. I could explain the approach to someone else. I could fix things without reverse-engineering AI's thought process, because AI hadn't been thinking for itself. It had been following a plan I'd already thought through.

It wasn't perfect every time. But the gap between what I wanted and what I got shrank noticeably. Instead of 60-80% completion with a painful last mile, I was getting closer to 90%, and the remaining 10% was straightforward because I understood the foundation underneath it.

AI-Led, not AI-Enabled

There's a useful way to frame this difference: "AI-Led" versus "AI-Enabled."

AI-Enabled is how most of us work with AI right now. We have access to the tool, we use it, and we react to what it produces. It's powerful, but it's reactive. We're along for the ride, cleaning up after.

AI-Led is a different relationship with the same tool. We think first. We clarify what needs to be done. We plan how it should be done. And only then do we let AI execute, within boundaries we've already set.

Same AI. Same capabilities. Just a different order of operations.

What that order looks like within a task

Here's what normally happens when we prompt AI. We describe what we want, and it starts writing code immediately. No questions, no discussion, no plan. Straight to execution. That's the default behavior, and it's why we end up with output we don't fully understand.

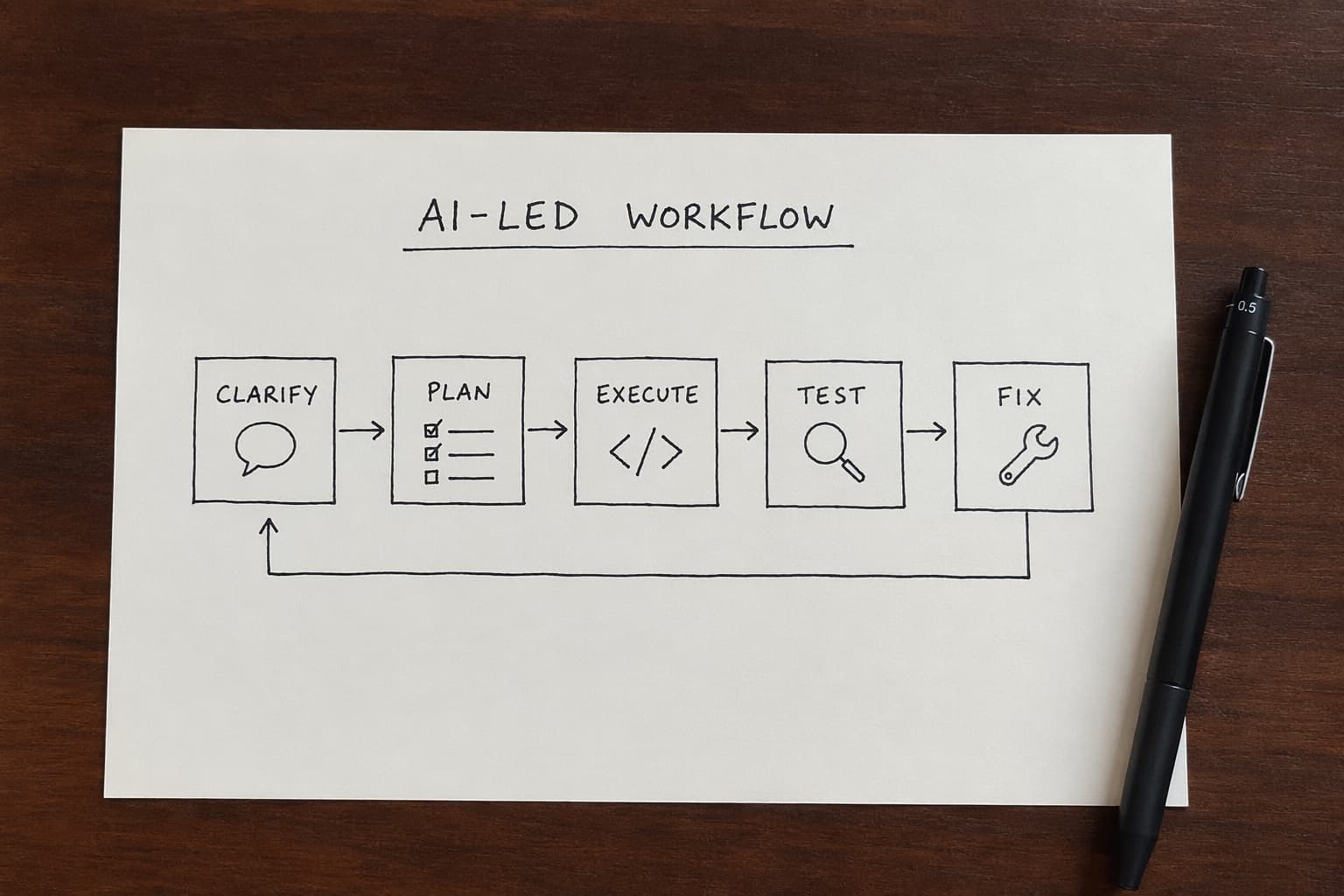

So I built a workflow that changes the order: clarify, plan, execute, test, fix.

/clarify

AI doesn't write a single line of code here. Instead, it brainstorms with me. It asks questions about things I haven't thought through. If something is vague, it pushes back. We go back and forth until what needs to be done is genuinely clear. The goals, the approach, the logic. Nothing vague survives this step.

/plan

Based on that clarity, AI reads the existing codebase to understand what's already there. What patterns exist, what files are involved, what conventions the project follows. From there it creates a detailed plan: which files to create, which to modify, what each file should do, a TODO list for execution. Everything is mapped out before anything gets built.

This is where most of my time goes. I iterate on clarify and plan, sometimes multiple rounds, until I'm confident that both the what and the how are solid. Some days I spend more time in these two steps than the rest combined. And that's the point. The thinking happens here.

/execute

Once I have that confidence, execute takes over. AI goes through the plan TODO by TODO, spawning agents for each task. It doesn't deviate. It doesn't make its own creative decisions. It builds what was planned, the way it was planned.

/test

Test validates the output against the goals we defined back in clarify. Did we achieve what we set out to do? Are there bugs? If everything checks out, we're done.

/fix

If not, fix doesn't just patch things. It does a proper root cause analysis. What's missing, why is it missing, where exactly did it break. Then it repairs it with that understanding.
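To make the ordering concrete, here is a minimal Python sketch of the loop as plain control flow. It is not how the slash commands themselves work; the step callables (`clarify`, `plan`, `execute_todo`, `test`, `fix`) and the `max_fix_rounds` cap are hypothetical stand-ins for what would, in practice, be prompts handed to the AI. What it shows is the constraint: nothing executes until a plan exists, execution follows the TODO list in order, and test checks against the goals fixed during clarify.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Spec:
    """Hypothetical output of /clarify: the goals /test checks against later."""
    goals: list[str]


@dataclass
class Plan:
    """Hypothetical output of /plan: files to touch and an ordered TODO list."""
    files_to_create: list[str] = field(default_factory=list)
    files_to_modify: list[str] = field(default_factory=list)
    todos: list[str] = field(default_factory=list)


def run_task(
    clarify: Callable[[], Spec],
    plan: Callable[[Spec], Plan],
    execute_todo: Callable[[str], None],
    test: Callable[[Spec], list[str]],
    fix: Callable[[str], None],
    max_fix_rounds: int = 3,
) -> list[str]:
    """Run clarify -> plan -> execute -> test -> fix; return any unresolved failures."""
    spec = clarify()            # thinking first: no code is written here
    the_plan = plan(spec)       # every file and TODO is mapped before building
    for todo in the_plan.todos:
        execute_todo(todo)      # build strictly in plan order, no improvising
    failures = test(spec)       # validate against the goals from clarify
    rounds = 0
    while failures and rounds < max_fix_rounds:
        for failure in failures:
            fix(failure)        # root-cause each failure, then repair it
        failures = test(spec)   # re-validate after each round of fixes
        rounds += 1
    return failures             # an empty list means the task is done


if __name__ == "__main__":
    built: list[str] = []
    fixed: list[str] = []

    def demo_test(spec: Spec) -> list[str]:
        # Hypothetical check: the ratings goal fails until it has been fixed once.
        return [g for g in spec.goals if g == "star ratings shown" and not fixed]

    remaining = run_task(
        clarify=lambda: Spec(goals=["carousel renders", "star ratings shown"]),
        plan=lambda spec: Plan(files_to_create=["testimonials.js"],
                               todos=["build carousel", "add star ratings"]),
        execute_todo=built.append,
        test=demo_test,
        fix=fixed.append,
    )
    print(built, fixed, remaining)
```

The cap on fix rounds is a design choice for the sketch: if fixes keep failing, the loop stops and hands the failures back, which is the point where a person reads the code instead of asking for another patch.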

That loop works well for individual tasks. But when I started applying this to larger projects, I realized something else was needed.

What that order looks like across a project

A single clarify-plan-execute cycle works great for a feature or a component. But a full project has dozens of those. And if you try to clarify and plan an entire project in one go, you end up with something too big for AI to execute well and too abstract for you to verify meaningfully.

So I started breaking projects into phases.

Say I want to build a Shopify app that helps merchants track events on GA4 and Meta. That's not one task. It's several distinct pieces of work, and each one has its own scope, its own dependencies, and its own definition of done.

So the phases might look like:

- App setup

- GA4, GTM, and Meta onboarding

- Standard event creation through Shopify's App Pixel

- Custom event creation through AI

Each of those is a meaningful chunk of work. But they're still too big to hand directly to AI. So each phase breaks down further into sub-phases.

Phase 1 might become:

- 1.1 Scaffold the Shopify app

- 1.2 Set up authentication and permissions

- 1.3 Configure the development environment

Phase 2 might become:

- 2.1 GA4 OAuth flow

- 2.2 GTM container setup

- 2.3 Meta API integration

And so on. Each sub-phase is small enough that clarify, plan, execute, test, fix can run within it cleanly. Each one has a clear goal and a clear end state.

What this gives me is a structure I can work through sequentially. Finish 1.1, verify it works, move to 1.2. Finish Phase 1, assess whether the foundation supports Phase 2, then move forward. If something I learned during Phase 2 changes how I think about Phase 3, I can adjust the plan for Phase 3 before I get there.

The inner loop, clarify through fix, handles the work within each sub-phase. The outer loop, the phase structure, handles the project as a whole.
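The outer loop can be sketched the same way. This is a minimal Python illustration, assuming a hypothetical `run_sub_phase` callable that stands in for one full clarify-through-fix cycle and returns whether the sub-phase reached its end state, and a hypothetical `revise_next` hook for adjusting the next phase once the current one is finished. The phase and sub-phase names come from the Shopify example above.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SubPhase:
    id: str
    goal: str


@dataclass
class Phase:
    name: str
    sub_phases: list[SubPhase] = field(default_factory=list)


def run_project(
    phases: list[Phase],
    run_sub_phase: Callable[[SubPhase], bool],
    revise_next: Callable[[Phase, Phase], Phase],
) -> list[str]:
    """Work through phases in order and return the ids of verified sub-phases.

    Stops at the first sub-phase that fails verification, so later work is
    never built on an unverified foundation.
    """
    phases = list(phases)  # copy so revisions don't mutate the caller's list
    completed: list[str] = []
    for i, phase in enumerate(phases):
        for sub in phase.sub_phases:
            if not run_sub_phase(sub):  # one inner clarify -> fix cycle
                return completed
            completed.append(sub.id)
        if i + 1 < len(phases):
            # What was learned in this phase may reshape the next one.
            phases[i + 1] = revise_next(phase, phases[i + 1])
    return completed


if __name__ == "__main__":
    project = [
        Phase("App setup", [
            SubPhase("1.1", "Scaffold the Shopify app"),
            SubPhase("1.2", "Set up authentication and permissions"),
            SubPhase("1.3", "Configure the development environment"),
        ]),
        Phase("GA4, GTM, and Meta onboarding", [
            SubPhase("2.1", "GA4 OAuth flow"),
            SubPhase("2.2", "GTM container setup"),
            SubPhase("2.3", "Meta API integration"),
        ]),
    ]
    done = run_project(project, run_sub_phase=lambda sub: True,
                       revise_next=lambda finished, nxt: nxt)
    print(done)  # ['1.1', '1.2', '1.3', '2.1', '2.2', '2.3']
```

Because `enumerate` reads `phases[i]` lazily from the copied list, a revision made after Phase 1 is the version that actually runs as Phase 2.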

The part that doesn't change

There's something I want to be honest about. This approach doesn't eliminate the gap entirely. I still find that AI gets about 80% of things right. That remaining 20% still requires me to read the code, understand what happened, and fix what needs fixing.

I don't think that 20% is going away anytime soon. And I actually think that's fine.

Because with a phased approach, that 20% is contained. It happens within a sub-phase I understand, on a foundation I planned, in a codebase I can navigate. Fixing 20% of Phase 2.1 is a very different experience from fixing 20% of an entire project you didn't fully think through.

The effort doesn't disappear. But it becomes manageable, because you're fixing work you understand rather than work you're seeing for the first time.

Where the time goes now

If I look at where I spend most of my time these days, it's not in execution. It's in defining phases and writing them down clearly. Figuring out what each phase needs to achieve. Thinking through what success looks like at each level before any code gets written.

The phases, and the prompts that describe them, have become my primary output. Not code. The code comes from AI. My job is to create the structure that makes that code coherent.

I don't want to overstate this. It's not a perfect system. Some days the phasing is wrong and I have to restructure mid-project. Some sub-phases are harder to define clearly than others. And there are still moments where I need to just get in the code and figure things out myself.

But the general direction feels right to me. Invest in clarity. Break things into phases. Define what done looks like before execution starts. And let AI do what it's good at, within boundaries you've set.

We're not being replaced. What we do is changing. And I think the developers who'll get the most out of these tools are the ones who get comfortable with that shift. Not better at prompting. Better at thinking before the prompt.