Horses for Courses: Why Tool Selection Beats Tool Mastery

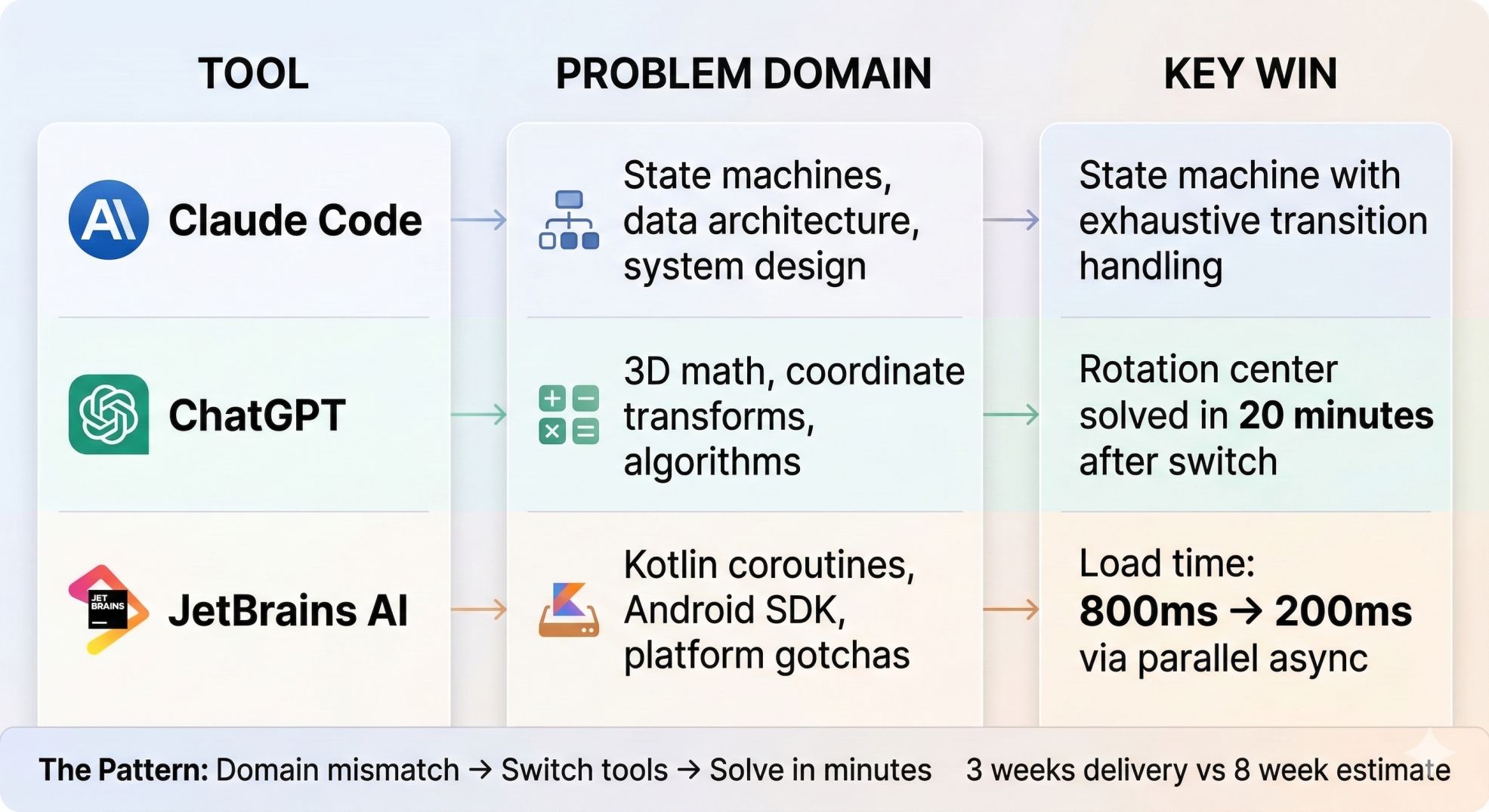

Building an Android app for The Sleep Company taught me that choosing the right AI tool matters more than mastering one. The project needed system architecture, Android optimization, and 3D mathematics. I used three specialized tools instead of one: Claude Code for architecture, JetBrains AI for Android patterns, ChatGPT for math.

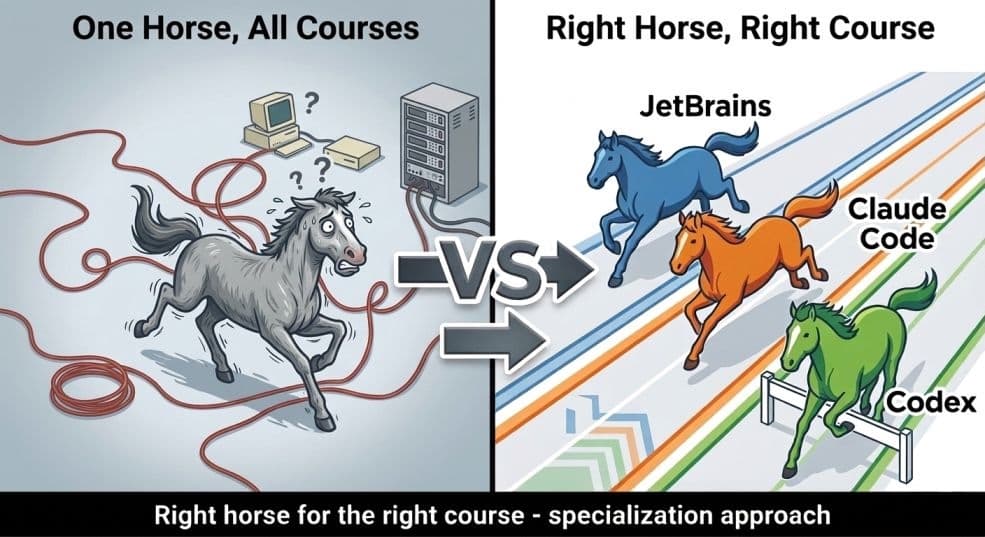

The principle is simple: different tools excel at different tasks. British horse racing calls it "horses for courses" - a racehorse performs best on tracks suited to its strengths. A flat-track champion might lose on hills. The strategy isn't finding the universally "best" horse. It's matching horses to courses where they naturally excel.

AI tools work the same way. System design requires understanding component relationships. Framework optimization requires platform-specific knowledge. Mathematical problems require computational reasoning. These are different problem domains. Using specialized tools for each domain is faster than using one tool for everything.

This article explains the three tools I used and why each one fit specific tasks.

TL;DR

Finished in 3 weeks. Internal estimate was 8 weeks. Used three AI tools instead of one - Claude Code for system design, ChatGPT for 3D math, JetBrains AI for Android gotchas. The strategy isn't tool mastery. It's knowing which problem needs which mind.

The Default That Costs You

Most developers who use AI tools settle on one and use it for everything. Claude Code becomes the hammer. Every problem is a nail.

The issue isn't that these tools are bad. It's that they're differently good. Claude Code reasons about systems. ChatGPT works from mathematical first principles. JetBrains AI knows the Android SDK the way a platform expert does. Use any one of them outside its domain and you get answers that are technically plausible but subtly wrong - and debugging "subtly wrong" is expensive.

The pattern I kept hitting: describe a problem, get a confident-sounding answer, implement it, have it not work, repeat two or three times - then switch tools and solve it in minutes.

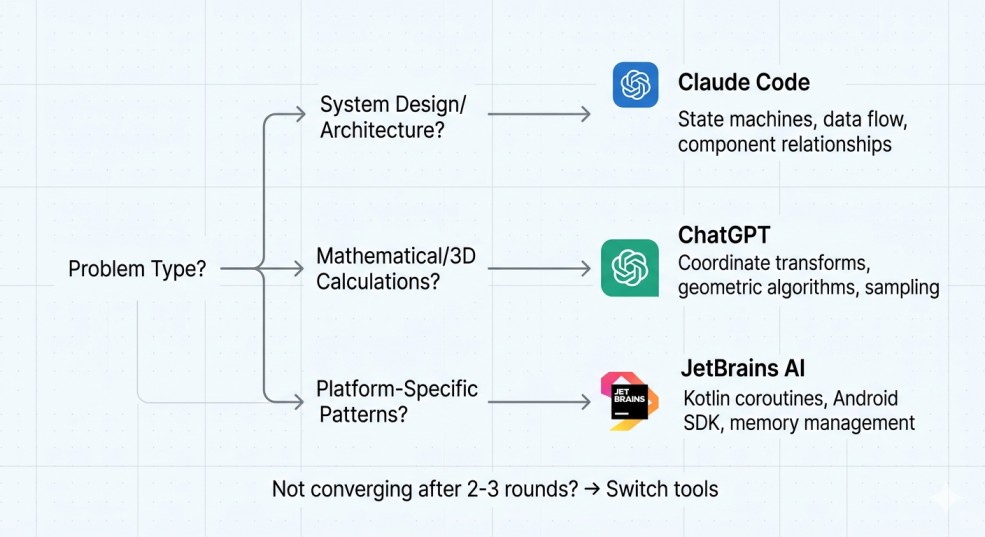

The tool map that emerged from those failures:

| Problem class | Tool | Why it wins |
|---|---|---|
| System architecture, state machines, data flow | Claude Code | Reasons about component relationships and design patterns |
| Kotlin idioms, Android SDK constraints, coroutine scoping | JetBrains AI | Framework-specific knowledge baked in |
| Geometric transforms, sampling algorithms, coordinate math | ChatGPT | Mathematical reasoning from first principles |

That's the thesis. The rest of this post is the evidence.

What Was Actually at Stake

TSC TV is a 3D product visualization platform for The Sleep Company's offline retail stores. A customer walks up to an Android TV, browses mattresses and sleep products as interactive 3D models, explodes them into parts, examines individual components. Behind it: a web CMS for product management, Firebase syncing content across the store network in real time.

Deployment target: 20 stores at launch, 50 by month 6, 200 within 12 months.

Technical constraints that made this hard:

- 13 products, each with 3D model files (GLBs) ranging from 30 to 40 MB

- 60 FPS on mid-range Android TV hardware

- Store-specific product versioning - Store A can show v1 while Store B is already on v2

- Firestore syncing updates across the entire network in real time

Timeline: Single developer. Delivered in 3 weeks.

The Problem I Couldn't Solve Until I Switched

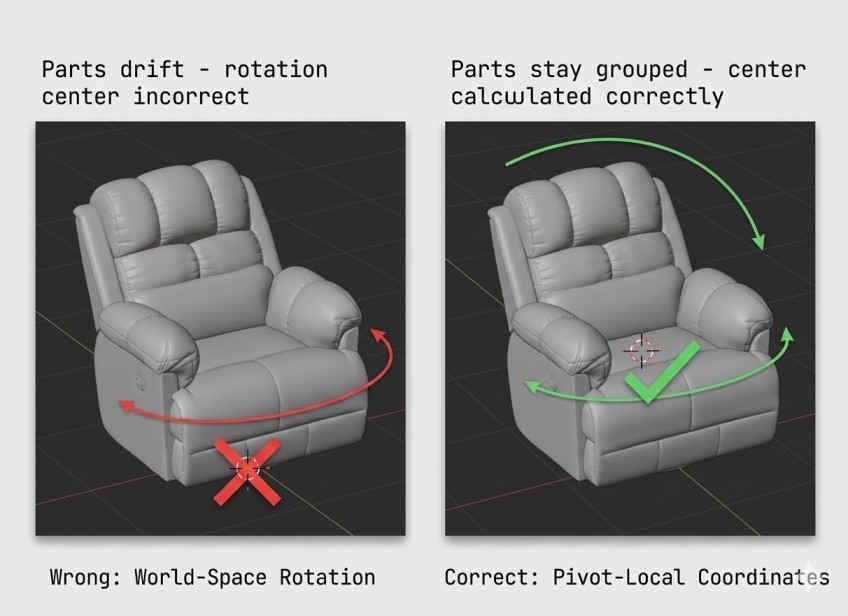

About a week in, I was stuck on the exploded view. The app needed all product parts to rotate around a shared center point as a unit. Parts were positioned separately in 3D space; the rotation anchor kept drifting.

I brought this to Claude Code. Described the problem: parts positioned independently, rotation off-center. It suggested calculating the center from part position averages. I implemented it. Didn't work. Added diagnostic logs, shared the output. Got revised suggestions. Still broken after two more rounds.

Switched to ChatGPT with the exact same initial prompt.

- Exchange 1: Center calculation from part positions - same suggestion as Claude Code.
- Exchange 2: I asked for diagnostic output for bounds and transforms.
- Exchange 3: It identified the issue.

The problem wasn't the center calculation. It was a coordinate system mismatch - world-space positions needed to be converted to pivot-local coordinates before the rotation was applied.

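```kotlin
// Re-express the part's world-space position relative to the shared center (the pivot)
val localOriginal = Position(
    x = part.originalPosition.x - center.x,
    y = part.originalPosition.y - center.y,
    z = part.originalPosition.z - center.z
)

// Use the pivot-local value both as the node position and as the part's rest position
part.modelNode.position = localOriginal
part.originalPosition = localOriginal
```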

Worked on first implementation. Total time from switching tools to working code: under 20 minutes.

The distinction: Claude Code kept treating the rotation center as a geometry averaging problem. Once it saw the diagnostic output, ChatGPT reframed it as a coordinate system transformation - which is what it actually is. It thinks in the language of 3D math. Claude Code thinks in the language of software architecture. Same problem description. Completely different reasoning.

That's when the tool switching became intentional.
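To make the world-to-pivot-local step concrete, here is a minimal, self-contained sketch. `Vec3`, `centroid`, and `toPivotLocal` are hypothetical names for illustration, not the project's actual types, and the sketch assumes the pivot is simply the average of the part positions.

```kotlin
// Hypothetical stand-in for the scene library's position type.
data class Vec3(val x: Float, val y: Float, val z: Float) {
    operator fun plus(o: Vec3) = Vec3(x + o.x, y + o.y, z + o.z)
    operator fun minus(o: Vec3) = Vec3(x - o.x, y - o.y, z - o.z)
}

// Pivot chosen as the average of the part positions (an assumption for this sketch).
fun centroid(points: List<Vec3>): Vec3 {
    require(points.isNotEmpty()) { "need at least one part" }
    val sum = points.reduce { acc, p -> acc + p }
    val n = points.size.toFloat()
    return Vec3(sum.x / n, sum.y / n, sum.z / n)
}

// Re-express world-space positions relative to the pivot.
fun toPivotLocal(worldPositions: List<Vec3>, pivot: Vec3): List<Vec3> =
    worldPositions.map { it - pivot }
```

After the conversion, the local positions average to the origin, so rotating the parent pivot spins the parts about their shared center instead of about the world origin.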

Claude Code: Architecture Isn't Just Code

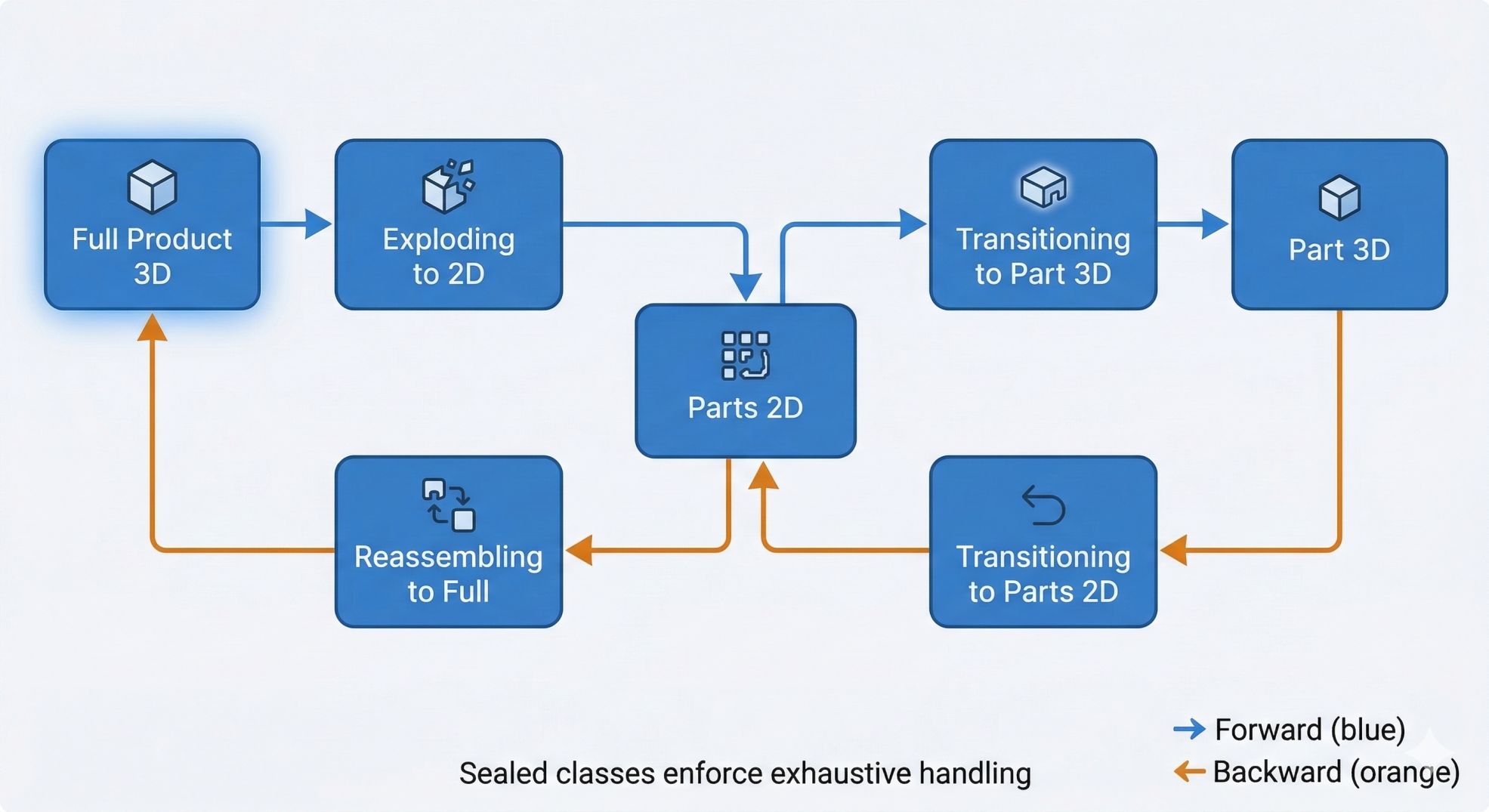

The state machine is the clearest example of Claude Code's domain.

The app has six view states: full 3D product, exploding to parts, 2D parts view, reassembling, transitioning to individual part 3D, individual part 3D. Earlier in the project, I'd tried designing this with ChatGPT. It produced code for individual states - each one worked. But it completely missed the architecture: no sealed classes for type safety, no exhaustive transition handling, no model of which state changes are valid. It was solving six separate problems, not one system.

Switched to Claude Code. First response:

```kotlin
sealed class AppState {
    data object FullProduct3D : AppState()
    data object ExplodingTo2D : AppState()
    data object Parts2D : AppState()
    data object ReassemblingToFull : AppState()
    data class TransitioningToPart3D(val partName: String) : AppState()
    data class Part3D(val partName: String) : AppState()
    data class TransitioningToParts2D(val fromPart: String) : AppState()
}

private fun transitionToState(newState: AppState) {
    currentState = newState
    when (newState) {
        is AppState.FullProduct3D -> enterFullProductState()
        is AppState.ExplodingTo2D -> enterExplodingState()
        is AppState.Part3D -> enterPart3DState(newState.partName)
        // ...remaining branches omitted in this excerpt; the full `when` covers every
        // AppState subclass, and the compiler enforces that for sealed types
    }
}
```

Because AppState is sealed, the compiler rejects any when that leaves a state unhandled (a hard error for when statements since Kotlin 1.7). Backward transitions (exploded to reassembling to full model) were modeled explicitly. The state machine became generic across all product types without any structural changes.
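One way to also model which state changes are valid is an exhaustive `when` expression over the current state. The sketch below is illustrative only: `ViewState` mirrors the states listed above under a different name, and the transition table in `canTransition` is an assumption about the flow, not the project's actual rules.

```kotlin
// Illustrative copy of the view states; the allowed transitions below are assumed.
sealed class ViewState {
    data object FullProduct3D : ViewState()
    data object ExplodingTo2D : ViewState()
    data object Parts2D : ViewState()
    data object ReassemblingToFull : ViewState()
    data class TransitioningToPart3D(val partName: String) : ViewState()
    data class Part3D(val partName: String) : ViewState()
    data class TransitioningToParts2D(val fromPart: String) : ViewState()
}

// `when` used as an expression must be exhaustive, so adding a new state
// without deciding its outgoing transitions fails to compile.
fun canTransition(from: ViewState, to: ViewState): Boolean = when (from) {
    is ViewState.FullProduct3D -> to is ViewState.ExplodingTo2D
    is ViewState.ExplodingTo2D -> to is ViewState.Parts2D
    is ViewState.Parts2D ->
        to is ViewState.ReassemblingToFull || to is ViewState.TransitioningToPart3D
    is ViewState.ReassemblingToFull -> to is ViewState.FullProduct3D
    is ViewState.TransitioningToPart3D ->
        to is ViewState.Part3D && to.partName == from.partName
    is ViewState.Part3D ->
        to is ViewState.TransitioningToParts2D && to.fromPart == from.partName
    is ViewState.TransitioningToParts2D -> to is ViewState.Parts2D
}
```

A guard like this could run at the top of a transition function and reject or log invalid jumps, such as going from the full product straight to a single part.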

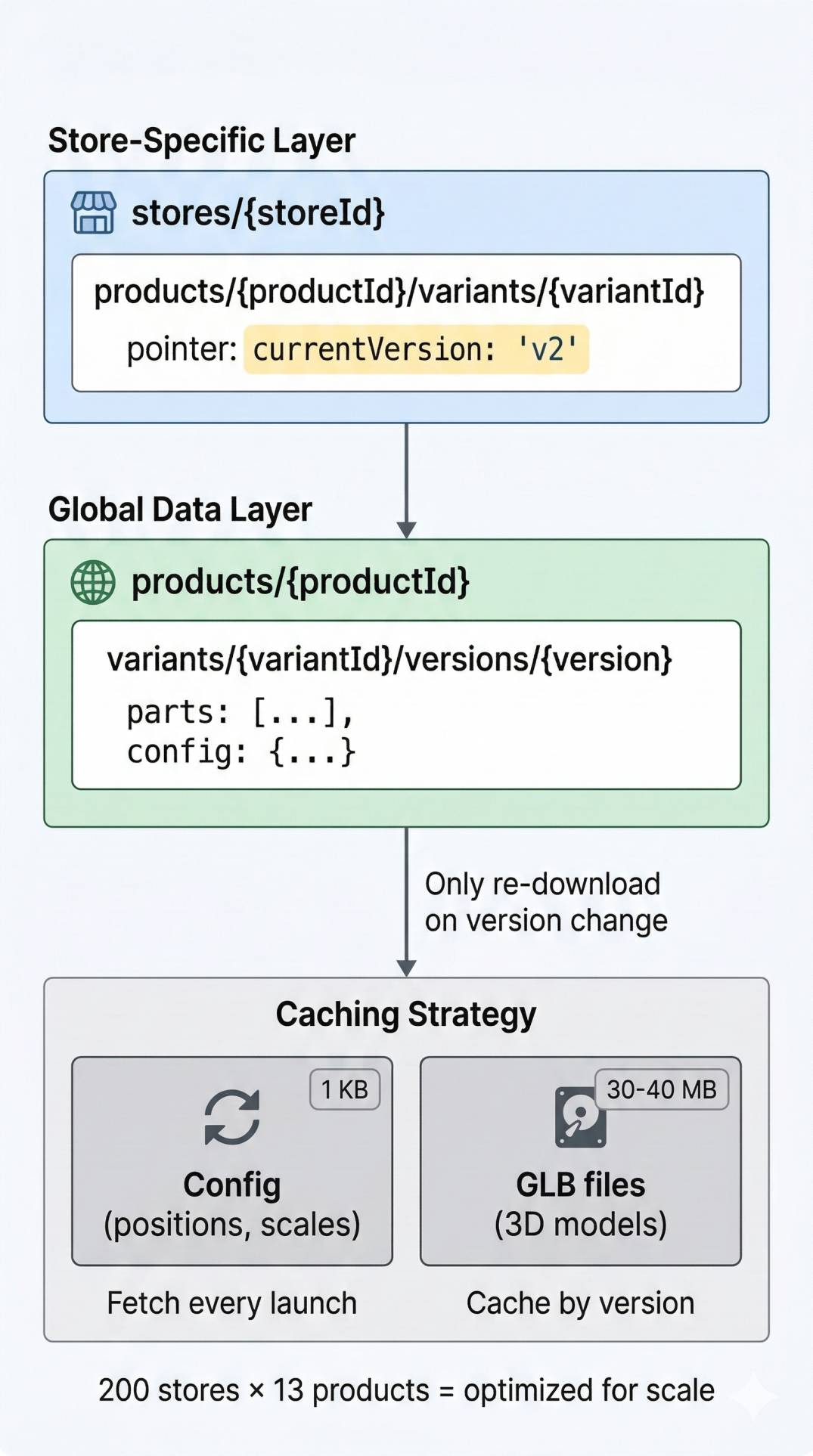

Same pattern for the Firestore schema. The problem: 13 products with 30-40 MB GLBs each, 200 stores on the roadmap. Re-downloading models on every launch wasn't an option. But store-specific versioning (Store A on v1, Store B on v2) meant you couldn't just cache globally either.

The data model Claude Code designed: a three-level hierarchy separating store-specific pointers from globally shared model data:

```
// stores/{storeId}/products/{productId}/variants/{variantId}
//   currentVersion: "v2"    ← store-specific pointer

// products/{productId}/variants/{variantId}/versions/{version}
//   parts: [...]            ← shared global data
```
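The two-step lookup this layout implies (read the store's pointer, then follow it to the shared version document) can be sketched without any Firebase dependency. The function names are hypothetical, and `readCurrentVersion` is a stand-in for whatever call actually fetches the `currentVersion` field.

```kotlin
// Path of the store-specific pointer document.
fun storeVariantPath(storeId: String, productId: String, variantId: String): String =
    "stores/$storeId/products/$productId/variants/$variantId"

// Path of the globally shared, versioned model data.
fun sharedVersionPath(productId: String, variantId: String, version: String): String =
    "products/$productId/variants/$variantId/versions/$version"

// Resolve which shared document a given store should load.
// `readCurrentVersion` is a placeholder for the real database read.
fun resolveVersionPath(
    storeId: String,
    productId: String,
    variantId: String,
    readCurrentVersion: (pointerPath: String) -> String?
): String? =
    readCurrentVersion(storeVariantPath(storeId, productId, variantId))
        ?.let { version -> sharedVersionPath(productId, variantId, version) }
```

Two stores pointing at different versions resolve to different shared documents, while stores on the same version share one copy of the model data.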

The caching strategy that follows naturally:

```
// Config (positions, scales) - fetch fresh every launch (1 KB, negligible)
// GLB files (3D models)      - cache by version, only re-download on version change
```

Result: app launches with cached models instantly, only downloads when the CMS pushes a new version. With 30-40 MB files and a 200-store rollout, that's not a nice-to-have.
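A rough sketch of "cache by version" using plain files follows. The `ModelRef` type, the directory layout, and the function names are assumptions made for illustration; the project's real cache may be organized differently.

```kotlin
import java.io.File

// Hypothetical identifier for one versioned model.
data class ModelRef(val productId: String, val variantId: String, val version: String)

// Assumed layout: <cacheDir>/<productId>/<variantId>/<version>.glb
fun cachedModelFile(cacheDir: File, ref: ModelRef): File =
    File(File(File(cacheDir, ref.productId), ref.variantId), "${ref.version}.glb")

// Download only when this exact version is not on disk yet.
fun needsDownload(cacheDir: File, ref: ModelRef): Boolean {
    val file = cachedModelFile(cacheDir, ref)
    return !file.isFile || file.length() == 0L
}

// Once a new version is cached, delete older versions of the same variant.
// Returns the number of stale files removed.
fun pruneOldVersions(cacheDir: File, current: ModelRef): Int {
    val keep = cachedModelFile(cacheDir, current)
    val siblings = keep.parentFile?.listFiles() ?: return 0
    return siblings.count { it.isFile && it.name != keep.name && it.delete() }
}
```

Because the version is part of the file name, a pointer change in the CMS naturally misses the cache and triggers exactly one download, and unchanged versions are never fetched again.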

JetBrains AI: What the Others Got Wrong

JetBrains AI wasn't a deliberate choice - it was already in the IDE. What made it useful was that it caught things the other tools had written incorrectly.

During optimization, loading 7 chair parts sequentially was blocking the UI for 400-800ms. Claude Code suggested sequential coroutines - faster than the original synchronous code, but still not parallel. JetBrains AI suggested async inside a coroutineScope with Dispatchers.IO:

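```kotlin
import kotlinx.coroutines.Dispatchers
import kotlinx.coroutines.async
import kotlinx.coroutines.awaitAll
import kotlinx.coroutines.coroutineScope

private suspend fun loadProductParts(config: ProductConfig) {
    // coroutineScope suspends until every child has finished
    val results: List<PartLoadResult> = coroutineScope {
        config.parts.map { partConfig ->
            // One async per part, so the loads run concurrently on the IO dispatcher
            async(Dispatchers.IO) {
                loadSinglePart(partConfig, config.parts.size)
            }
        }.awaitAll()
    }

    results.forEach { result ->
        // handle each PartLoadResult here (omitted in this excerpt)
    }
}
```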

Load time: from as much as 800 ms down to under 200 ms.

It also flagged that the LruCache for cubemap textures was missing a sizeOf() override. Without it, the cache tracks entry count, not actual bytes. On TV hardware with 30-40 MB GLBs and limited RAM, that's a crash waiting to happen:

```kotlin
val cubemapCache: LruCache<String, CubemapData> by lazy {
    object : LruCache<String, CubemapData>(MAX_CACHE_SIZE_BYTES) {
        // Report each entry's real size so the cache limit is measured in bytes
        override fun sizeOf(key: String, value: CubemapData): Int {
            return value.sizeInBytes() // actual bytes, not count
        }

        override fun entryRemoved(
            evicted: Boolean, key: String,
            oldValue: CubemapData, newValue: CubemapData?
        ) {
            // Direct buffers live outside the Java heap; System.gc() is only a hint
            if (evicted && oldValue.buffer.isDirect) {
                System.gc()
            }
        }
    }
}
```

And it caught a Dispatchers.Main call being used for file I/O - something neither Claude Code nor ChatGPT had flagged in the code they'd helped write. This is not general reasoning. This is baked-in knowledge of Android SDK constraints. That's the niche.
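To see why the sizeOf() override matters, here is a tiny byte-bounded LRU written in plain Kotlin. It is not Android's LruCache, only a stand-in that mimics the role sizeOf() plays in eviction; `ByteBoundedLru` and its members are hypothetical names.

```kotlin
// Minimal LRU whose budget is measured in whatever unit `sizeOf` reports.
class ByteBoundedLru<K : Any, V : Any>(
    private val maxSize: Int,
    private val sizeOf: (V) -> Int
) {
    // accessOrder = true keeps the least recently used entry first
    private val map = LinkedHashMap<K, V>(16, 0.75f, true)

    var currentSize = 0
        private set

    val entryCount: Int get() = map.size

    fun get(key: K): V? = map[key]

    fun put(key: K, value: V) {
        // Replace any previous value and keep the running size accurate
        map.remove(key)?.let { currentSize -= sizeOf(it) }
        map[key] = value
        currentSize += sizeOf(value)

        // Evict least recently used entries until the budget is respected
        val iter = map.entries.iterator()
        while (currentSize > maxSize && iter.hasNext()) {
            val eldest = iter.next()
            currentSize -= sizeOf(eldest.value)
            iter.remove()
        }
    }
}
```

With `sizeOf = { it.size }` and a budget of 10, three 4-byte arrays leave only two entries in the cache. With `sizeOf = { 1 }`, which is what a missing override amounts to, the same budget admits ten entries of any size, so the real memory held is unbounded by the limit you thought you set.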

ChatGPT: When It's Actually a Math Problem

The rotation center problem was the clearest case. Two others sealed the pattern.

Cubemap memory. Textures were consuming 24 MB each on load. ChatGPT's fix: calculate inSampleSize as the largest power of 2 that keeps both decoded dimensions at or above the required size. Powers of 2 matter because BitmapFactory rounds any other value down to the nearest power of 2, and because the decoded image never drops below the requested size, the downsampling costs no detail at the resolution actually rendered.

```kotlin
private fun calculateInSampleSize(
    options: BitmapFactory.Options,
    reqWidth: Int,
    reqHeight: Int
): Int {
    // Raw dimensions of the image, read from a bounds-only decode
    val (height: Int, width: Int) = options.run { outHeight to outWidth }
    var inSampleSize = 1

    if (height > reqHeight || width > reqWidth) {
        val halfHeight: Int = height / 2
        val halfWidth: Int = width / 2

        // Keep doubling while the downsampled image still covers the requested size
        while (halfHeight / inSampleSize >= reqHeight &&
            halfWidth / inSampleSize >= reqWidth) {
            inSampleSize *= 2
        }
    }

    return inSampleSize
}
```

Texture memory: 24 MB to 6 MB per cubemap.
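The 4x drop is what the arithmetic predicts: a sample size of 2 halves each dimension, so the pixel count falls to a quarter. The sketch below reruns the same loop on plain integers and estimates cubemap memory. The 1024-pixel face size, six faces, and 4 bytes per pixel (ARGB_8888) are assumptions chosen because they happen to reproduce the 24 MB figure; the project's actual texture dimensions are not stated.

```kotlin
// Same loop as calculateInSampleSize, but on plain Ints so it runs off-device.
fun sampleSizeFor(width: Int, height: Int, reqWidth: Int, reqHeight: Int): Int {
    var inSampleSize = 1
    if (height > reqHeight || width > reqWidth) {
        val halfHeight = height / 2
        val halfWidth = width / 2
        while (halfHeight / inSampleSize >= reqHeight &&
            halfWidth / inSampleSize >= reqWidth) {
            inSampleSize *= 2
        }
    }
    return inSampleSize
}

// Decoded size of a six-face cubemap with square faces (assumed layout).
fun cubemapBytes(faceSize: Int, inSampleSize: Int, bytesPerPixel: Int = 4): Long {
    val side = (faceSize / inSampleSize).toLong()
    return 6L * side * side * bytesPerPixel
}
```

Under those assumptions, `cubemapBytes(1024, 1)` is 24 MiB and `cubemapBytes(1024, sampleSizeFor(1024, 1024, 512, 512))` is 6 MiB.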

Camera FOV. Calculating camera distance from a scene bounding box requires using horizontal FOV for a landscape TV aspect ratio, not vertical. ChatGPT got the first implementation wrong (used vertical FOV), but identified and corrected it on the next exchange once the visual symptom was described. That's still two exchanges to a correct answer - not one. Mathematical reasoning is a strength, not a guarantee.
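For reference, the relationship between the two FOVs and the fitting distance can be sketched as below. This is a generic formulation, not the project's code: it assumes a symmetric perspective camera, a bounding box centered on the view axis, and it ignores the box's depth. It also checks both axes and takes the larger distance, which is one common approach rather than a confirmed detail of the implementation.

```kotlin
import kotlin.math.atan
import kotlin.math.max
import kotlin.math.tan

// Horizontal FOV derived from the vertical FOV and the aspect ratio (width / height).
fun horizontalFov(verticalFovRad: Double, aspect: Double): Double =
    2.0 * atan(tan(verticalFovRad / 2.0) * aspect)

// Distance at which a box of the given width and height fits in view.
// Width is judged against the horizontal FOV, height against the vertical FOV.
fun fitDistance(
    boxWidth: Double,
    boxHeight: Double,
    verticalFovRad: Double,
    aspect: Double
): Double {
    val forHeight = (boxHeight / 2.0) / tan(verticalFovRad / 2.0)
    val forWidth = (boxWidth / 2.0) / tan(horizontalFov(verticalFovRad, aspect) / 2.0)
    return max(forHeight, forWidth)
}
```

For a wide, flat product on a 16:9 screen the width term dominates, which is why plugging the vertical FOV into the width calculation produces a visibly wrong camera distance.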

What I Got Wrong

The timeline is real. The friction was also real.

Wrong tool attempts cost time. Spent 20+ minutes on the rotation center problem with Claude Code before switching. No heuristic existed upfront for "hybrid" problems. Some required trial and error before the right tool became obvious. The recognition pattern - "this is a math problem, not an architecture problem" - gets faster with practice but isn't instant.

ChatGPT wasn't always right on first attempt. Camera FOV was wrong initially. Rotation center took three exchanges. Mathematical reasoning is a strength, not a guarantee.

Automation had real blind spots. Animation timing issues, button placement, corrupted GLB handling - all found in user testing, not monitoring. Technical delivery wasn't the same as zero issues.

The strategy worked because the right-tool wins compounded. Wrong-tool costs were bounded - usually 20-30 minutes before switching. The ratio held.

The One Thing That Transfers

The mapping this project produced:

- Coordinate math, bitmap sampling, geometric transforms - ChatGPT

- State machines, caching strategies, data architecture - Claude Code

- Kotlin coroutines, Android memory management, SDK gotchas - JetBrains AI

That map came from failures, not planning. Your failures on your stack will produce a different one.

The practice that transfers: when a tool isn't converging after two or three rounds, the problem is probably domain mismatch, not prompt quality. Switch. The 20 minutes you spend grinding with the wrong tool are almost always longer than the three exchanges it takes the right one.

Right horse for the right course. The trick is knowing which problem is which kind of race - and being willing to swap mid-stride when you've called it wrong.