SkillBlueprint: A Self-Enforcing React Native Audit System

TL;DR

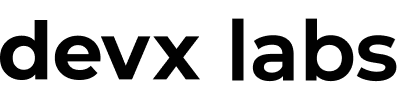

- Every React Native screen passes through a 5-phase workflow before it can be committed

- The audit runs 8 layers, entirely offline:

  - Error handling

  - Security

  - Performance and real-device guard

  - Responsive layout

  - Navigation types

  - TypeScript

  - Learned rules

  - Test coverage intelligence

- Only 2 of the 5 phases use AI tokens; the rest are deterministic and local

- Any hard FAIL in an audit layer blocks the commit, including a required test scenario left uncovered without a documented reason; coverage below 80% raises a warning

- Every production bug gets encoded as an enforced rule -- the system gets harder to break over time, not easier

The problem with "we'll catch it in review"

PR review was our only enforcement layer. And it was slow, inconsistent, and human.

The same issues kept appearing across screens written by different engineers: tokens stored in AsyncStorage instead of EncryptedStorage, ScrollView wrapping flat lists with hundreds of items, useEffect triggering API calls on every render, Dimensions.get hardcoded into layout math. Each one caught in review. Each one appearing again two weeks later in a different file.

The root problem wasn't carelessness -- it was that these rules lived in heads and Notion docs, not in the tools engineers actually ran. We needed enforcement that fired before the PR was even opened.

How the workflow runs

SkillBlueprint is a five-phase pipeline. You describe what you want to build, and it produces committed, tested, audited code.

You say: "make login screen"

→ Phase 1: Reuse Check (offline)

→ Phase 2: Code Generation (AI)

→ Phase 3: Audit (offline)

→ Phase 4: Test Generation (AI)

→ Phase 5: Run Tests (offline)

Phases 2 and 4 call the AI. Phases 1, 3, and 5 are fully offline -- no tokens, no latency, no cost.

Phase 1: Check what already exists

Before generating a single line, the system scans src/components/, src/hooks/, src/store/, and src/screens/ for implementations that match what's being requested.

This kills the most common form of codebase bloat: reimplementing what already exists. If useAuth already handles the token flow, code generation reuses it. If LoadingSpinner exists, it won't be recreated inline as a local component. Duplication is caught before it happens, not after.
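The matching step can be sketched as a pure function over an index of exported names, assuming the directory scan has already run. The ExportIndex shape, the stop-word list, and the camelCase-splitting heuristic are illustrative assumptions, not SkillBlueprint's actual matcher, which may well use richer signals than identifier names.

```typescript
// Hypothetical shape of the Phase 1 scan: directory -> exported names found in it.
type ExportIndex = Record<string, string[]>;

interface ReuseCandidate {
  dir: string;
  name: string;
  matchedTerms: string[];
}

// Words that describe the request rather than what to reuse. "screen" is dropped
// because it would match every file in src/screens/.
const STOP_WORDS = new Set(["make", "create", "build", "add", "a", "the", "new", "screen"]);

// Splits camelCase/PascalCase identifiers: "useAuthToken" -> ["use", "auth", "token"].
function splitIdentifier(name: string): string[] {
  return name
    .replace(/([a-z0-9])([A-Z])/g, "$1 $2")
    .toLowerCase()
    .split(/[\s_-]+/)
    .filter((part) => part.length > 0);
}

export function findReuseCandidates(request: string, index: ExportIndex): ReuseCandidate[] {
  const terms = request
    .toLowerCase()
    .split(/\W+/)
    .filter((t) => t.length > 0 && !STOP_WORDS.has(t));

  const candidates: ReuseCandidate[] = [];
  for (const [dir, names] of Object.entries(index)) {
    for (const name of names) {
      const parts = new Set(splitIdentifier(name));
      const matchedTerms = terms.filter((t) => parts.has(t));
      if (matchedTerms.length > 0) candidates.push({ dir, name, matchedTerms });
    }
  }
  // Names matching more request terms are stronger reuse candidates.
  return candidates.sort((a, b) => b.matchedTerms.length - a.matchedTerms.length);
}
```

With an index where src/components/ contains LoginForm and src/hooks/ contains useLogin, the request "make login screen" surfaces both before any code is generated. Matching on names alone would miss useAuth for a login request, so a real matcher would need synonyms or a look at file contents.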

Phase 2: Code generation with non-negotiable rules

Code generation runs against a strict ruleset. Not suggestions -- enforced rules. Any generated code that violates them fails the audit in Phase 3.

- @/ path aliases only -- no ../../../

- Props interface at top of file

- StyleSheet.create at bottom -- no inline styles

- FlashList for all lists -- no ScrollView, no FlatList

- FastImage for network images

- EncryptedStorage for tokens -- AsyncStorage banned for auth data

- useFocusEffect for API calls -- useEffect banned for data fetching

- Zustand slice: loading + error both required, not optional

- testID on every interactive element

The rules exist because each one maps to a real incident -- a token leaked through AsyncStorage, a list that janked on budget Android, a crash that only reproduced on navigation focus. The audit layer is what gives these rules teeth.
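Rules like these are easiest to enforce when they live as data next to the code that checks them. A minimal sketch, assuming a one-regex-per-rule representation; the rule IDs and patterns are hypothetical, and line-level regexes are a much cruder tool than an AST-based check.

```typescript
interface Rule {
  id: string;
  description: string;
  pattern: RegExp; // no "g" flag: RegExp.test with "g" keeps state between calls
}

interface Violation {
  ruleId: string;
  line: number;
  text: string;
}

// Hypothetical line-level encodings of a few generation rules. Rules that need
// context (ScrollView wrapping a list, useEffect doing a fetch) are left to the audit.
export const GENERATION_RULES: Rule[] = [
  { id: "no-relative-parent-imports", description: "Use @/ path aliases", pattern: /from\s+['"](\.\.\/)+/ },
  { id: "no-inline-styles", description: "Use StyleSheet.create", pattern: /style=\{\{/ },
  { id: "no-flatlist", description: "Use FlashList for lists", pattern: /\bFlatList\b/ },
  {
    id: "no-asyncstorage-for-tokens",
    description: "Use EncryptedStorage for auth data",
    pattern: /AsyncStorage\.\w+\(\s*['"][^'"]*(token|auth)/i,
  },
];

export function checkRules(source: string, rules: Rule[] = GENERATION_RULES): Violation[] {
  const violations: Violation[] = [];
  source.split("\n").forEach((text, i) => {
    for (const rule of rules) {
      if (rule.pattern.test(text)) violations.push({ ruleId: rule.id, line: i + 1, text: text.trim() });
    }
  });
  return violations;
}
```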

Phase 3: The audit

npm run audit

Eight layers run in sequence. A hard FAIL on any layer blocks the commit.
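The sequencing and blocking behaviour can be sketched as a loop over layer functions. The Layer signature, the WARN status, and the exit-code convention are assumptions for illustration; the article only specifies that a hard FAIL on any layer blocks the commit.

```typescript
type Status = "PASS" | "WARN" | "FAIL";

interface LayerResult {
  layer: string;
  status: Status;
  messages: string[];
}

// Hypothetical layer signature: inspect one file and report a status.
type Layer = (file: string, source: string) => LayerResult;

export function runAudit(
  file: string,
  source: string,
  layers: Layer[],
): { results: LayerResult[]; exitCode: number } {
  // Every layer runs, so one invocation reports all problems rather than only the first.
  const results = layers.map((layer) => layer(file, source));
  // Only a hard FAIL blocks the commit; warnings are reported but leave the exit code at 0.
  const blocked = results.some((r) => r.status === "FAIL");
  return { results, exitCode: blocked ? 1 : 0 };
}
```

This sketch keeps running after a FAIL so a single run reports every problem; stopping at the first failure would also fit the description and would be faster. A pre-commit hook would call it for each staged screen and abort on a non-zero exit code.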

Layer 1: Error handling

Scans for missing try/catch, empty catch blocks that silently swallow errors, and screens that fetch data without surfacing error or loading state to the UI. A screen that can fail without telling the user it failed doesn't pass.
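Two of these checks can be approximated with pattern matching. A sketch under that assumption: the regexes and the "mentions loading and error" proxy are hypothetical heuristics, and a production version would more likely walk the syntax tree (for example with the TypeScript compiler API) to see real block structure.

```typescript
interface Finding {
  check: "empty-catch" | "unsurfaced-state";
  detail: string;
}

export function checkErrorHandling(source: string): Finding[] {
  const findings: Finding[] = [];

  // catch {} or catch (e) {} containing only whitespace or comments: the error is swallowed.
  const emptyCatch = /catch\s*(\([^)]*\))?\s*\{(\s|\/\/[^\n]*|\/\*[\s\S]*?\*\/)*\}/g;
  const emptyCount = (source.match(emptyCatch) ?? []).length;
  if (emptyCount > 0) {
    findings.push({ check: "empty-catch", detail: `${emptyCount} catch block(s) swallow errors` });
  }

  // Crude proxy: the file fetches data but never mentions loading or error state,
  // so the UI has nothing to render while waiting or after a failure.
  const fetchesData = /\b(fetch|axios)\s*[.(]/.test(source);
  const hasLoading = /\b(loading|isLoading)\b/.test(source);
  const hasError = /\berror\b/.test(source);
  if (fetchesData && !(hasLoading && hasError)) {
    findings.push({ check: "unsurfaced-state", detail: "data is fetched without loading and error state" });
  }
  return findings;
}
```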

Layer 2: Security

Flags AsyncStorage usage for any token or credential storage, hardcoded URLs, hardcoded API keys, and sensitive data passed through navigation params. Navigation params show up in crash reporters and navigation logs -- they're not a safe place for auth tokens or user IDs.

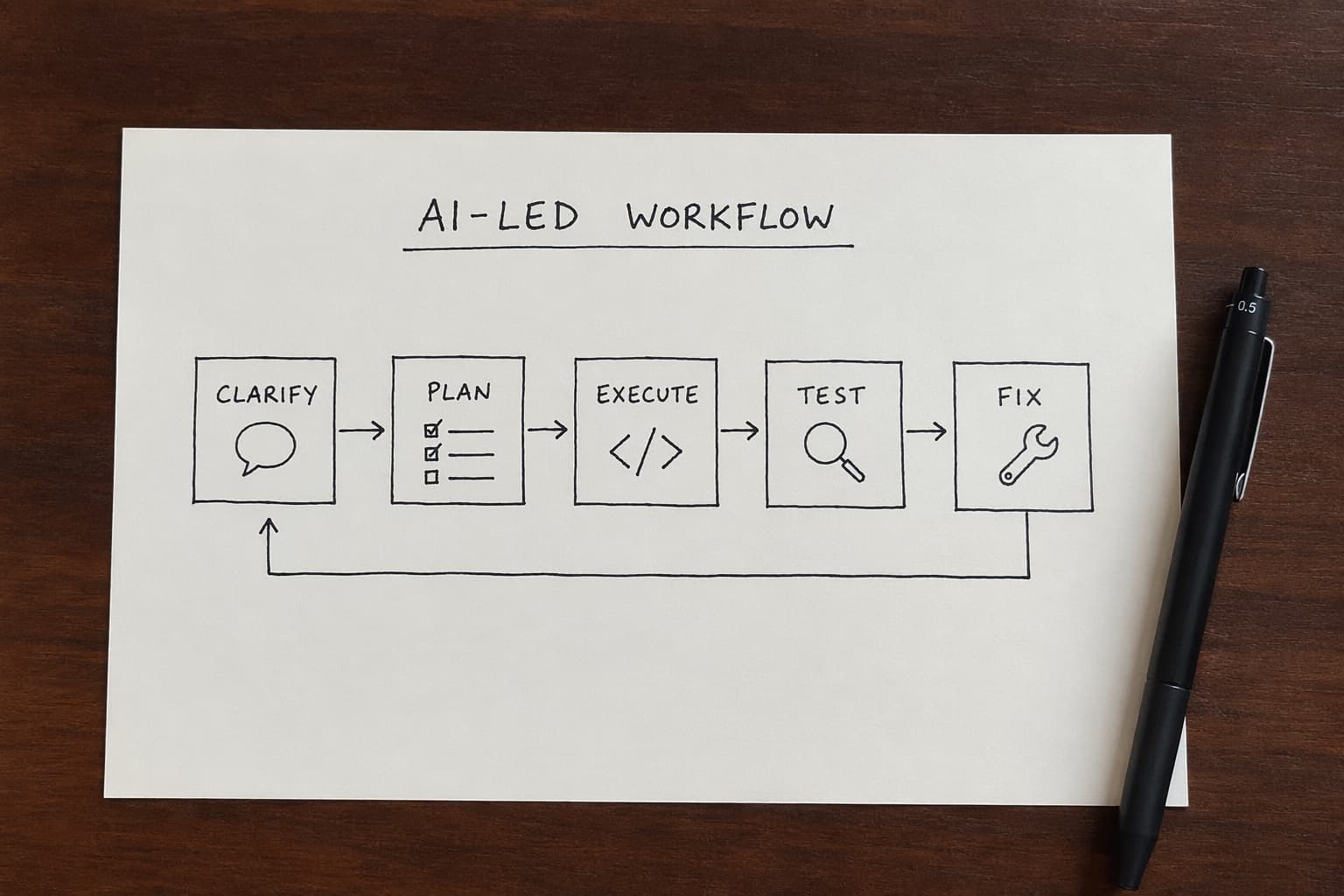
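The security checks described above can be approximated line by line. A sketch, assuming regex heuristics; the SENSITIVE name list, the 16-character threshold for key-like literals, and the rule names are illustrative assumptions that would need tuning against real false positives.

```typescript
type SecurityRule = "asyncstorage-credential" | "hardcoded-url" | "hardcoded-secret" | "sensitive-nav-param";

interface SecurityFinding {
  line: number;
  rule: SecurityRule;
}

// Hypothetical list of names that suggest credentials or identifiers.
const SENSITIVE = /(token|password|secret|credential|userid)/i;

export function checkSecurity(source: string): SecurityFinding[] {
  const findings: SecurityFinding[] = [];
  source.split("\n").forEach((text, i) => {
    const line = i + 1;
    // Credentials written to or read from unencrypted AsyncStorage.
    if (/AsyncStorage\.(setItem|getItem)\(/.test(text) && SENSITIVE.test(text)) {
      findings.push({ line, rule: "asyncstorage-credential" });
    }
    // Absolute URLs belong in environment config, not in screen code.
    if (/['"`]https?:\/\//.test(text)) {
      findings.push({ line, rule: "hardcoded-url" });
    }
    // A long literal assigned to something named like a key or secret.
    if (/(api[_-]?key|secret)\s*[:=]\s*['"][\w-]{16,}['"]/i.test(text)) {
      findings.push({ line, rule: "hardcoded-secret" });
    }
    // Only the params argument of navigate/push/replace is checked, not the route name.
    const nav = /\.(navigate|push|replace)\s*\(\s*['"][^'"]+['"]\s*,(.*)/.exec(text);
    if (nav && SENSITIVE.test(nav[2])) {
      findings.push({ line, rule: "sensitive-nav-param" });
    }
  });
  return findings;
}
```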

Layer 3: Performance and real-device guard

Static analysis catches the patterns that degrade performance on real hardware:

- ScrollView wrapping lists -- virtualization off, full render on mount

- Animated from react-native -- drives animations from the JS thread unless useNativeDriver is enabled, and the native driver can't animate layout properties, so busy screens jank; use react-native-reanimated (v4)

- Dimensions.get for layout sizing -- breaks on orientation change and foldables

- useEffect for API calls -- fires on every dependency change, not on screen focus

Beyond static checks, each screen gets a real-device performance guard. Ten code signals are mapped to three guards:

FPS Guard → PASS / FAIL

Memory Guard → PASS / FAIL

Render Guard → PASS / FAIL

Any FAIL blocks the merge. The signals are derived from patterns with known performance regressions on low-end Android hardware -- the kind of devices most of our users are on.
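The article doesn't list the ten signals, so the signal names and their guard mapping below are hypothetical placeholders based on the static checks above. The sketch only shows the shape of the mapping: a triggered signal fails every guard it maps to, and any failed guard blocks the merge.

```typescript
type Guard = "FPS" | "Memory" | "Render";
type Verdict = "PASS" | "FAIL";

// Hypothetical signals standing in for the real ten; each maps to the guard(s) it degrades.
const SIGNAL_GUARDS: Record<string, Guard[]> = {
  scrollViewWrappingList: ["Memory", "Render"],
  coreAnimatedApi: ["FPS"],
  uncachedNetworkImage: ["Memory"],
};

export function evaluateGuards(triggeredSignals: string[]): Record<Guard, Verdict> {
  const verdicts: Record<Guard, Verdict> = { FPS: "PASS", Memory: "PASS", Render: "PASS" };
  for (const signal of triggeredSignals) {
    // Unknown signal names are ignored here; a stricter version might throw instead.
    for (const guard of SIGNAL_GUARDS[signal] ?? []) verdicts[guard] = "FAIL";
  }
  return verdicts;
}

// Any single failed guard is enough to block.
export function guardsBlockMerge(verdicts: Record<Guard, Verdict>): boolean {
  return Object.values(verdicts).includes("FAIL");
}
```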

Layer 4: Responsive layout

Catches hardcoded pixel dimensions that break on non-standard screen sizes, missing SafeAreaView on screens with content near the edges, incorrect Platform branching, and direct usage of Image from core React Native instead of FastImage.
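A rough sketch of three of these checks. The 200 dp threshold, the issue names, and the rule that every screen file must reference SafeAreaView or useSafeAreaInsets (from react-native-safe-area-context) are assumptions; the real layer presumably has more context than a regex for deciding which screens have content near the edges, and Platform branching isn't sketched here.

```typescript
// Hypothetical threshold: fixed widths/heights at or above this (in dp) are flagged,
// since they are likely to overflow or leave gaps on narrow or foldable screens.
const MAX_FIXED_DP = 200;

export function checkResponsiveLayout(source: string): string[] {
  const issues: string[] = [];

  // Core Image imported from react-native instead of FastImage.
  if (/import\s*\{[^}]*\bImage\b[^}]*\}\s*from\s*['"]react-native['"]/.test(source)) {
    issues.push("core-image");
  }

  // Numeric width/height literals such as "width: 320".
  const dims: string[] = source.match(/\b(width|height)\s*:\s*\d+/g) ?? [];
  for (const dim of dims) {
    const value = Number(dim.split(":")[1]);
    if (value >= MAX_FIXED_DP) issues.push(`hardcoded-dimension ${dim.replace(/\s+/g, "")}`);
  }

  // Screens should respect notches and system bars via a safe-area wrapper or insets.
  if (!/\b(SafeAreaView|useSafeAreaInsets)\b/.test(source)) {
    issues.push("missing-safe-area");
  }
  return issues;
}
```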

Layer 5: Navigation types

Every navigation.navigate() call must be typed against the route params. Every useNavigation() call must carry its route type. Untyped navigation compiles, but a wrong or missing param only shows up at runtime, typically as an undefined value on the destination screen, so the audit treats it as a hard failure.
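A sketch of the useNavigation() half of this check, assuming a line-level regex. Flagging untyped hooks is enough to hand the rest to tsc: once the navigation object is typed against a param list (RootStackParamList here is a hypothetical name), a navigate() call with a wrong route name or param shape becomes a compile error in Layer 6.

```typescript
// Flags useNavigation() called without a type argument. A typed call looks like
// useNavigation<NativeStackNavigationProp<RootStackParamList>>(), which lets tsc
// check every navigate() call's route name and params.
export function findUntypedNavigation(source: string): number[] {
  const lines: number[] = [];
  source.split("\n").forEach((text, i) => {
    // Matches "useNavigation(" with nothing but whitespace before the parenthesis,
    // so a generic call such as useNavigation<Nav>() is not flagged.
    if (/\buseNavigation\s*\(/.test(text)) lines.push(i + 1);
  });
  return lines;
}
```

React Navigation also supports declaring a global RootParamList, after which a plain useNavigation() is already typed; a project using that pattern would need to relax this check.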

Layer 6: TypeScript

Runs tsc --noEmit and surfaces every type error before it reaches CI. If TypeScript fails, the audit fails, so type errors are caught before the code leaves the machine.
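The pass/fail decision only needs tsc's exit code, but per-file reporting needs the diagnostics parsed. A sketch, assuming the layer runs tsc --noEmit --pretty false (which prints one file(line,col): error TSxxxx: message line per diagnostic) and captures the output as a string; the TscDiagnostic shape is an assumption.

```typescript
interface TscDiagnostic {
  file: string;
  line: number;
  column: number;
  code: string;
  message: string;
}

// tsc's non-pretty format: path(line,col): error TS1234: message
const DIAGNOSTIC = /^(.+)\((\d+),(\d+)\): error (TS\d+): (.*)$/;

export function parseTscOutput(output: string): TscDiagnostic[] {
  const diagnostics: TscDiagnostic[] = [];
  for (const raw of output.split(/\r?\n/)) {
    // Indented continuation lines of multi-line messages don't match and are skipped.
    const m = DIAGNOSTIC.exec(raw);
    if (m) {
      diagnostics.push({ file: m[1], line: Number(m[2]), column: Number(m[3]), code: m[4], message: m[5] });
    }
  }
  return diagnostics;
}
```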

Layer 7: Learned rules

This layer reads from knowledge/learned-rules.json -- a file that grows every time a bug makes it through everything else and reaches production.

Bug reaches production

→ Root cause identified

→ Rule added to learned-rules.json

→ Audit enforces it on every future file

→ That bug class never ships again

This is where institutional knowledge actually lives. Not in a Confluence doc nobody opens, not in a Slack thread that scrolls away -- in a file that runs on every commit. A developer joining the team on day one gets every rule learned over the past year, automatically enforced.
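The article doesn't show the format of learned-rules.json. A sketch assuming each entry stores a regex source string, a message, and an optional path prefix; the field names and the example rule in the usage are hypothetical. Patterns are compiled once at load time so a malformed rule fails loudly instead of silently matching nothing.

```typescript
// Hypothetical entry shape for knowledge/learned-rules.json.
interface LearnedRule {
  id: string;
  incident: string; // short description of (or link to) the production bug it encodes
  pattern: string; // regex source; JSON has no RegExp type
  flags?: string;
  message: string;
  appliesTo?: string; // optional path prefix, e.g. "src/screens/"
}

export function loadLearnedRules(json: string): LearnedRule[] {
  const parsed: unknown = JSON.parse(json);
  const rules =
    typeof parsed === "object" && parsed !== null ? (parsed as { rules?: unknown }).rules : undefined;
  if (!Array.isArray(rules)) {
    throw new Error('learned-rules.json must contain a top-level "rules" array');
  }
  const typed = rules as LearnedRule[];
  // Compile every pattern once so a malformed rule fails at load time, not mid-audit.
  for (const rule of typed) new RegExp(rule.pattern, rule.flags);
  return typed;
}

export function applyLearnedRules(file: string, source: string, rules: LearnedRule[]): string[] {
  return rules
    .filter((r) => r.appliesTo === undefined || file.startsWith(r.appliesTo))
    .filter((r) => new RegExp(r.pattern, r.flags).test(source))
    .map((r) => `${r.id}: ${r.message}`);
}
```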

Layer 8: Test coverage intelligence

Every screen gets a coverage scenario table before tests are generated:

| Scenario | Status |
|---|---|
| Renders correctly | ✅ Covered |
| Shows loading state | ✅ Covered |
| Handles API error | ✅ Covered |
| Empty state | ❌ Missing |
| User interaction | ✅ Covered |
| Navigation on success | ✅ Covered |
| Storage read/write | N/A -- screen has no storage |
| Accessibility | ❌ Missing |

Coverage percentage is calculated from this table. Below 80% is a warning. A required scenario marked missing with no documented reason is a hard FAIL -- the commit is blocked until it's covered or explicitly marked N/A with a reason.
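The calculation can be sketched under two assumptions the article doesn't spell out: N/A rows are excluded from the denominator, and a required scenario fails unless it is covered or marked N/A with a reason. The Scenario shape and the required flag are hypothetical.

```typescript
type ScenarioStatus = "covered" | "missing" | "na";

interface Scenario {
  name: string;
  status: ScenarioStatus;
  required: boolean;
  reason?: string; // documents why a scenario is marked N/A
}

interface CoverageReport {
  percent: number;
  verdict: "PASS" | "WARN" | "FAIL";
}

export function evaluateCoverage(scenarios: Scenario[], threshold = 80): CoverageReport {
  // N/A scenarios are excluded from the denominator rather than counted as covered.
  const applicable = scenarios.filter((s) => s.status !== "na");
  const covered = applicable.filter((s) => s.status === "covered").length;
  const percent = applicable.length === 0 ? 100 : (covered / applicable.length) * 100;

  // Hard FAIL: a required scenario left missing, or marked N/A without a reason.
  const blocked = scenarios.some(
    (s) => s.required && (s.status === "missing" || (s.status === "na" && !s.reason)),
  );
  if (blocked) return { percent, verdict: "FAIL" };
  return { percent, verdict: percent < threshold ? "WARN" : "PASS" };
}
```

Applied to the table above with every row treated as required, five of the seven applicable scenarios are covered (about 71%), and the two missing rows make it a hard FAIL rather than a warning.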

Auto-fix mode

npm run audit -- src/screens/LoginScreen.tsx --fix

For structural issues -- inline styles, missing testIDs, AsyncStorage replacements -- the audit rewrites the file directly and reports what changed. Anything it can't safely auto-fix is flagged with the exact file location and the rule it violated. The fix mode is intentionally conservative: we'd rather surface an issue than silently rewrite logic that has side effects we didn't model.
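The conservative approach can be illustrated with the AsyncStorage replacement. A sketch, assuming the fixer only touches a file when every AsyncStorage reference is a setItem, getItem, or removeItem call, which react-native-encrypted-storage also exposes; anything else (multiGet, passing AsyncStorage around) is flagged for a human. It also assumes the fixer only runs on files the security layer has already flagged, since it rewrites every AsyncStorage use in the file, not only auth data.

```typescript
interface FixResult {
  source: string;
  changes: string[];
  flagged: string[];
}

const ASYNC_IMPORT = /import\s+AsyncStorage\s+from\s+['"]@react-native-async-storage\/async-storage['"];?/;
// Methods assumed to translate one-to-one. Check the installed versions' typings:
// the two libraries' getItem return types may not match exactly.
const SAFE_METHODS = new Set(["setItem", "getItem", "removeItem"]);

export function fixAsyncStorage(source: string): FixResult {
  if (!ASYNC_IMPORT.test(source)) return { source, changes: [], flagged: [] };

  const calls: string[] = source.match(/\bAsyncStorage\.\w+\(/g) ?? [];
  const methods = calls.map((call) => call.slice("AsyncStorage.".length, -1));
  const unsafe = methods.filter((m) => !SAFE_METHODS.has(m));
  // Every reference besides the import binding must be a call we know how to translate.
  const references = (source.match(/\bAsyncStorage\b/g) ?? []).length - 1;
  if (unsafe.length > 0 || references !== calls.length) {
    const what = unsafe.length > 0 ? unsafe.join(", ") : "non-call reference";
    return { source, changes: [], flagged: [`AsyncStorage needs manual migration (${what})`] };
  }

  const fixed = source
    .replace(ASYNC_IMPORT, "import EncryptedStorage from 'react-native-encrypted-storage';")
    .replace(/\bAsyncStorage\./g, "EncryptedStorage.");
  return { source: fixed, changes: [`AsyncStorage -> EncryptedStorage in ${calls.length} call(s)`], flagged: [] };
}
```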

Phases 4 and 5: Test generation and execution

npm run testgen

Generates a full .test.tsx file with 15-20 test cases derived from the scenario table in Layer 8. Tests cover rendering, loading state, error state, user interactions, API flows, and storage behaviour.
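Phase 4 is AI-driven, but the step that turns the Layer 8 table into a test plan can be deterministic. A sketch, assuming Jest and a hypothetical PlannedScenario shape: one it.todo per applicable scenario gives the generator a fixed list of cases to fill in, and any case it leaves unimplemented stays visible in the test output.

```typescript
interface PlannedScenario {
  name: string;
  applicable: boolean; // false for rows marked N/A in the scenario table
}

// Emits a Jest skeleton with one it.todo per applicable scenario. Jest reports todos
// separately, so a scenario the generator never implements stays visible in the run.
export function testSkeleton(screen: string, scenarios: PlannedScenario[]): string {
  const cases = scenarios
    .filter((s) => s.applicable)
    .map((s) => `  it.todo(${JSON.stringify(s.name.toLowerCase())});`);
  return [`describe(${JSON.stringify(screen)}, () => {`, ...cases, "});", ""].join("\n");
}
```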

npm test

Runs the suite against real component logic. A screen that failed the audit in Phase 3 doesn't get here -- tests for broken code don't run until the code is clean.

What actually changed

Before: every other PR had the same categories of issues. Token storage in the wrong place. Performance antipatterns on list screens. Untyped navigation calls. No test coverage. Each one depended on a reviewer remembering to look.

After: those issues are caught before the file is committed. By the time a PR is opened, the code has passed 8 audit layers, has a generated test suite, and has been type-checked. Review time dropped. Regressions in those categories dropped to zero.

The more important change: every bug that gets through now makes the system stronger. The rules compound. The longer the system runs, the harder it becomes to ship bad code by accident.

What it doesn't solve

The real-device performance guard is based on code signal analysis, not actual profiling. A screen can pass all three guards and still have a performance problem that only surfaces under real network conditions or with a large dataset loaded. We treat the guard as a floor -- necessary, but not sufficient. Real-device testing on the low-end hardware we target is still required for anything performance-sensitive.

Learned rules are only as good as how they're written. A rule that's too broad blocks valid patterns. A rule that's too narrow misses the actual root cause. Every new rule addition goes through a review before it's committed to learned-rules.json -- the same care that goes into code goes into the rules that govern it.