For the last few years, most discussions around AI have revolved around models.

Which model is better?

Which model has a larger context window?

Which model performs best on benchmarks?

But once you start building real AI systems, a different reality becomes clear.

The difference between an AI system that feels generic and one that feels intelligent rarely comes down to the model alone.

It comes down to context.

Models generate answers.

Context determines whether those answers are meaningful.

The Early Focus: Prompt Engineering

When large language models first became widely accessible, the primary focus for developers was prompt engineering.

Developers experimented with prompts like:

- "Explain this code"

- "Provide step-by-step reasoning"

- "Be concise"

Sometimes this improved responses.

But it quickly became clear that changing the instructions only helps so much.

If the model lacks key information about the system, the codebase, or the problem being solved, the output will still be limited. The model fills in gaps by making assumptions.

This leads to responses that sound correct but lack real grounding.

The deeper issue is simple:

Beyond what they learned during training, language models can only reason over the information present in their context window.

No prompt can compensate for missing knowledge.

The Real Shift: From Prompts to Context

Modern AI systems are no longer single prompts sent to a model.

They are systems that assemble context before the model runs.

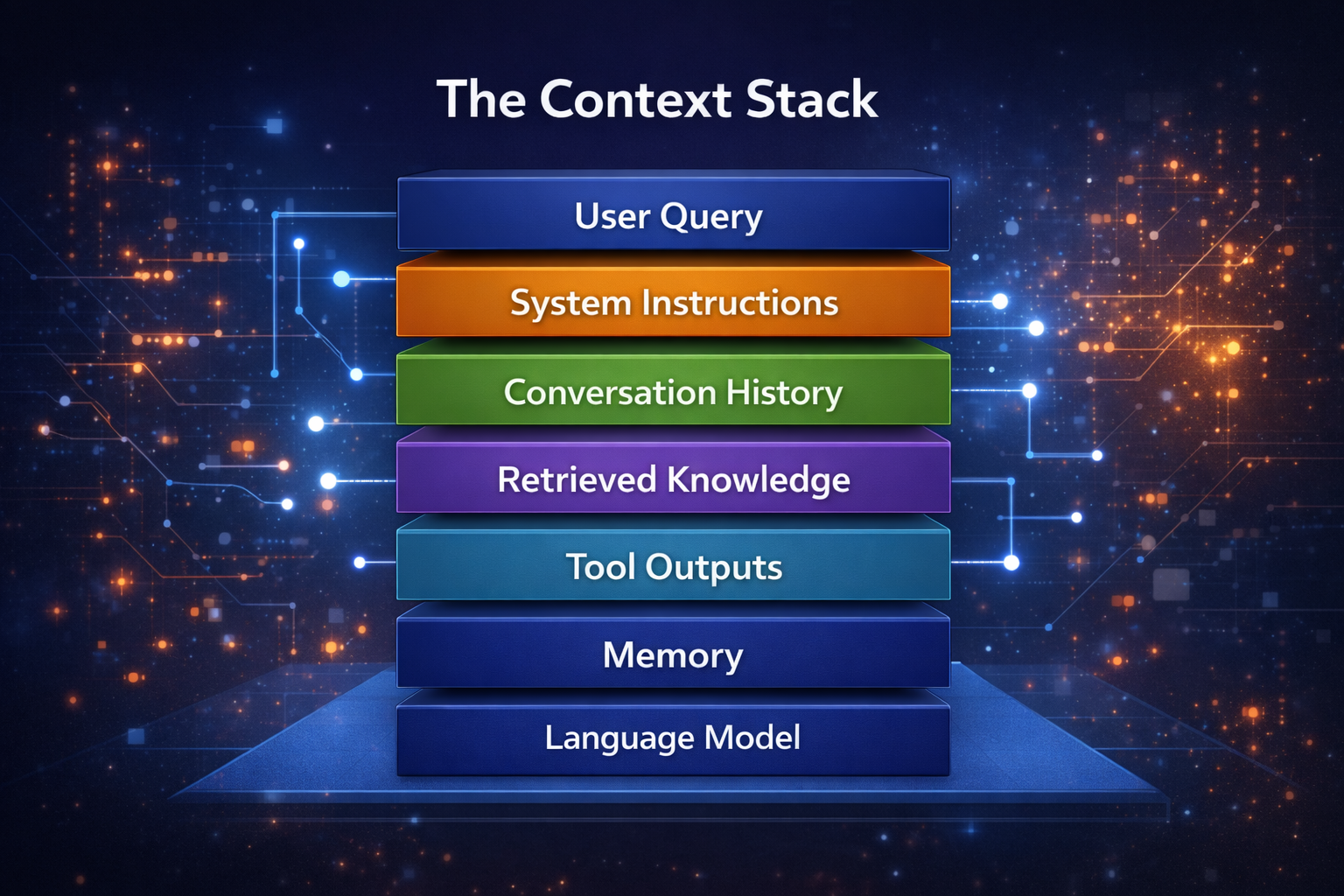

This includes information such as:

- system instructions

- conversation history

- documentation

- retrieved knowledge

- structured data

- tool outputs

- long-term memory

The role of the developer shifts from writing prompts to designing the information environment the model operates within.

This discipline is what we can call context engineering.

What Is Context Engineering?

Context engineering is the process of selecting, structuring, and delivering relevant information to an AI system at the moment it performs reasoning.

The goal is not to provide more information, but to provide the right information in the right structure.

In practical systems, context might include:

- product documentation

- codebase files

- API schemas

- knowledge base entries

- database records

- prior conversation summaries

- tool responses

The model uses this combined information to produce an answer.

When this context is well designed, the AI system becomes significantly more capable.

The Context Stack

In modern AI applications, context is not a single piece of text. It is a layered stack of information.

Each layer adds a different type of information that helps the model reason about the problem.

The effectiveness of the system depends on how these layers are constructed and ordered.
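As a rough illustration, a layered stack can be modeled as an ordered list of named sections that are rendered into one prompt. This is a minimal sketch, not a standard API: the `ContextLayer` class, the `render_stack` function, the layer names, and the sample text are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class ContextLayer:
    """One layer of the stack. The layer names used below are illustrative."""
    name: str
    content: str


def render_stack(layers: list[ContextLayer]) -> str:
    """Join non-empty layers in the given order, each under a labeled header."""
    sections = [
        f"## {layer.name}\n{layer.content.strip()}"
        for layer in layers
        if layer.content.strip()
    ]
    return "\n\n".join(sections)


# Hypothetical layers; the order of this list is the order the model sees.
stack = [
    ContextLayer("System instructions", "You are a support assistant for an internal billing tool."),
    ContextLayer("Retrieved knowledge", "Refunds are processed within 5 business days."),
    ContextLayer("Conversation history", ""),  # empty layers are skipped
    ContextLayer("User question", "How long does a refund take?"),
]
prompt = render_stack(stack)
print(prompt)
```

Keeping the layers as data rather than one hand-written string makes it easy to reorder them or drop one and compare the results.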

The Challenge: Context Is Hard

While adding context sounds straightforward, it introduces several engineering challenges.

Information Overload

Providing too much information can degrade performance.

Large language models have a finite context window, and they do not use everything inside it equally well. When large volumes of irrelevant data are included, the model struggles to identify which parts matter.

Effective context engineering prioritizes signal over noise.
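One simple way to favor signal over noise is to enforce a budget: rank candidate chunks by relevance and keep only what fits. The sketch below assumes each chunk already has a relevance score and approximates token counts by counting words; a real system would use the model's own tokenizer. The function name and sample data are hypothetical.

```python
def select_within_budget(chunks, budget_tokens):
    """Keep the highest-scoring chunks that fit within a token budget.

    `chunks` is a list of (relevance_score, text) pairs. Token cost is
    approximated by the number of whitespace-separated words (an assumption).
    Chunks that do not fit are skipped, so a smaller, lower-scoring chunk
    may still be added later.
    """
    selected = []
    used = 0
    for score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = len(text.split())
        if used + cost <= budget_tokens:
            selected.append(text)
            used += cost
    return selected


# Made-up chunks with made-up scores.
chunks = [
    (0.9, "refund policy five days"),
    (0.2, "office holiday party schedule and menu"),
    (0.7, "refunds need order id"),
]
kept = select_within_budget(chunks, budget_tokens=8)
print(kept)  # ['refund policy five days', 'refunds need order id']
```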

Context Placement

Models often pay more attention to information at the beginning and end of a prompt.

Important information buried in the middle of large context windows can be overlooked.

This means context must be structured deliberately, not simply appended.
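One possible way to act on this is to arrange ranked chunks so that the strongest ones sit at the edges of the context and the weakest ones land in the middle. The following is a sketch of that idea only; the function name is hypothetical, and whether this ordering helps a given model is something to measure rather than assume.

```python
def place_by_priority(ranked_chunks):
    """Arrange chunks so the most relevant sit at the start and end.

    `ranked_chunks` is ordered from most to least relevant. Chunks are dealt
    alternately to the front and the back, so the least relevant chunks end
    up in the middle of the returned list.
    """
    front, back = [], []
    for i, chunk in enumerate(ranked_chunks):
        (front if i % 2 == 0 else back).append(chunk)
    return front + back[::-1]


ordered = place_by_priority(["A", "B", "C", "D", "E"])  # A is most relevant
print(ordered)  # ['A', 'C', 'E', 'D', 'B']
```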

Context Drift

In long-running conversations, context accumulates over time.

Without careful management, this leads to:

- outdated instructions

- irrelevant history

- conflicting information

Effective systems periodically summarize, prune, and refresh context to maintain clarity.
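A minimal sketch of the summarize-and-prune step might look like this. It assumes conversation turns are stored as dictionaries with `role` and `content` keys, and that the caller supplies a `summarize` function. In practice that function is often another model call; here it is a trivial placeholder so the example runs on its own. All names are hypothetical.

```python
def compact_history(turns, keep_recent, summarize):
    """Replace older turns with one summary turn and keep the latest verbatim.

    `turns` is a list of {"role": ..., "content": ...} dicts. `summarize` is a
    caller-supplied function that maps a list of turns to a short string.
    """
    if len(turns) <= keep_recent:
        return list(turns)
    split = len(turns) - keep_recent
    older, recent = turns[:split], turns[split:]
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary] + recent


def count_summary(older):
    """Placeholder summarizer: it only reports how many turns were dropped."""
    return f"{len(older)} earlier turns omitted"


turns = [
    {"role": "user", "content": "Use tabs."},
    {"role": "assistant", "content": "OK."},
    {"role": "user", "content": "Actually, use spaces."},
    {"role": "assistant", "content": "Switched to spaces."},
]
compacted = compact_history(turns, keep_recent=2, summarize=count_summary)
```

In this example the outdated "Use tabs." instruction no longer appears verbatim, while the two most recent turns are kept unchanged.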

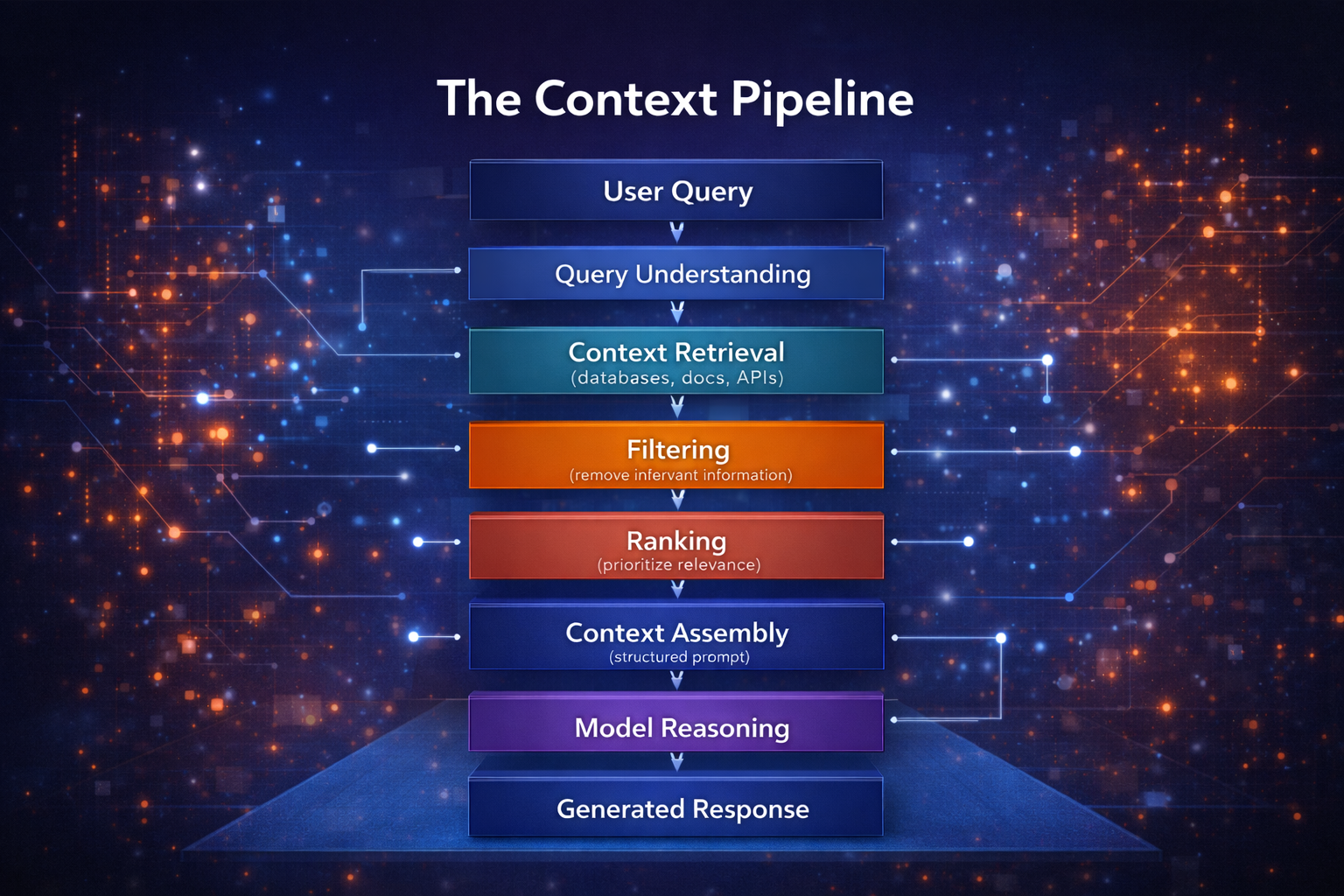

The Context Pipeline

In production AI systems, context is rarely assembled manually. Instead, it is constructed through a structured pipeline.

Each step reduces noise and improves the relevance of the information the model receives.
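To make the idea concrete, a pipeline can be written as a list of small stages, each of which takes candidate items and returns a smaller or better-ordered list. The stages chosen here (relevance filter, de-duplication, top-k) are assumptions for illustration, as are the function names, thresholds, and sample data; real pipelines vary.

```python
def run_pipeline(candidates, stages):
    """Pass candidate items through each stage in order."""
    for stage in stages:
        candidates = stage(candidates)
    return candidates


def drop_low_relevance(min_score):
    """Build a stage that removes items scoring below `min_score`."""
    def stage(items):
        return [item for item in items if item["score"] >= min_score]
    return stage


def deduplicate(items):
    """Keep the first of any items whose text matches, ignoring case."""
    seen, unique = set(), []
    for item in items:
        key = item["text"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(item)
    return unique


def top_k(k):
    """Build a stage that keeps the k highest-scoring items."""
    def stage(items):
        return sorted(items, key=lambda item: item["score"], reverse=True)[:k]
    return stage


# Made-up candidates with made-up relevance scores.
candidates = [
    {"text": "Deploys run from the main branch.", "score": 0.82},
    {"text": "deploys run from the main branch.", "score": 0.80},
    {"text": "The cafeteria closes at 3pm.", "score": 0.05},
    {"text": "Rollbacks use the previous release tag.", "score": 0.64},
    {"text": "Staging mirrors production config.", "score": 0.41},
]
result = run_pipeline(candidates, [drop_low_relevance(0.3), deduplicate, top_k(2)])
print([item["text"] for item in result])
```

Because each stage has the same shape (a list in, a list out), stages can be added, removed, or reordered without touching the rest of the pipeline.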

Retrieval-Augmented Generation

One of the most widely used context engineering patterns is retrieval-augmented generation (RAG).

Instead of relying solely on training data, the system retrieves relevant information from external sources at runtime.

These sources might include:

- documentation repositories

- databases

- knowledge bases

- internal APIs

- code repositories

The retrieved data becomes part of the context the model uses when generating responses.

This approach has two major advantages:

- Responses remain grounded in real data

- Knowledge stays up to date without retraining the model
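The retrieve-then-generate flow can be sketched in a few lines. This toy version scores documents by word overlap with the query instead of using embeddings or a search index, which is a deliberate simplification. The function names, prompt wording, and sample documents are invented for the example, and the final model call is left out.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, k=2):
    """Rank documents by how many distinct words they share with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_prompt(query, documents):
    """Place the retrieved passages ahead of the question."""
    passages = retrieve(query, documents)
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Made-up knowledge base entries.
docs = [
    "The API rate limit is 100 requests per minute.",
    "Invoices are emailed on the first day of each month.",
    "Rate limit errors return HTTP status 429.",
]
query = "What is the API rate limit?"
rag_prompt = build_prompt(query, docs)
print(rag_prompt)
```

Updating the answer here means editing `docs`, not retraining anything, which is the second advantage listed above.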

Context in AI Development Tools

Context engineering becomes especially powerful in developer tools.

For an AI system to understand and reason about code, it must see more than a single file.

Effective systems provide context such as:

- repository structure

- related modules

- dependency relationships

- code conventions

- documentation

- relevant commit history

Without this information, the model can only give general suggestions.

With proper context, it can generate solutions aligned with the architecture of the project.

This is why modern coding assistants rely heavily on code indexing, retrieval systems, and structured context pipelines.
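As one small example of gathering code context, a tool can parse a file's imports to decide which related modules to load alongside it. The sketch below uses Python's standard `ast` module; the `local_imports` helper and the module names in the example are hypothetical, and real assistants use far richer indexing than this.

```python
import ast


def local_imports(source, known_modules):
    """Return the names in `known_modules` that `source` imports.

    Only the top-level package name is compared. Relative imports such as
    `from . import x` carry no module name and are ignored.
    """
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            top = name.split(".")[0]
            if top in known_modules:
                found.add(top)
    return sorted(found)


# A made-up file and a made-up set of modules that exist in the repository.
source = "import os\nimport billing.invoices\nfrom auth import tokens\n"
related = local_imports(source, {"billing", "auth", "reports"})
print(related)  # ['auth', 'billing']
```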

Context vs Prompt Engineering

The difference between prompt engineering and context engineering is structural.

| Prompt Engineering | Context Engineering |
|---|---|
| Focus on writing prompts | Focus on building context systems |
| Single interaction | Multi-stage pipelines |
| Manual experimentation | Structured architecture |
| Limited scalability | Designed for production systems |

Prompt engineering focuses on how we ask questions.

Context engineering focuses on what information the system has when answering.

The Evolution of AI Systems

AI systems are evolving rapidly, moving through roughly three stages:

Prompt-Based Tools

↓

Context-Aware Assistants

↓

AI Agents

Agents require significantly more capabilities than simple prompt systems.

They must:

- access external tools

- maintain memory

- reason across multiple steps

- operate within environments

All of these capabilities rely heavily on context management.

In many ways, context becomes the operating system of an AI agent.
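A stripped-down agent loop shows why: every tool result is written back into the context, and the next decision is made from that updated context. In this sketch the `decide` function stands in for a model call and is scripted by hand so the example runs without any external service. The function names, the action format, and the single tool are all assumptions for illustration.

```python
def run_agent(task, decide, tools, max_steps=5):
    """Run a decide/act loop in which each tool result is added to the context.

    `decide` receives the context (a list of strings) and returns either
    ("call", tool_name, argument) or ("finish", answer).
    """
    context = [f"Task: {task}"]
    for _ in range(max_steps):
        action = decide(context)
        if action[0] == "finish":
            return action[1], context
        _, tool_name, argument = action
        result = tools[tool_name](argument)
        context.append(f"{tool_name}({argument!r}) -> {result!r}")
    return None, context  # step limit reached without an answer


# A hypothetical tool and a scripted stand-in for the model.
tools = {"word_count": lambda text: len(text.split())}


def scripted_decide(context):
    if len(context) == 1:  # nothing observed yet, so call the tool
        return ("call", "word_count", "context is the operating system")
    return ("finish", context[-1])  # answer with the latest observation


answer, final_context = run_agent("count words", scripted_decide, tools)
print(answer)  # word_count('context is the operating system') -> 5
```

Memory, tool access, and multi-step reasoning all show up here as reads from and writes to the same `context` list.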

Why Context Engineering Will Matter Even More

Model capabilities are improving quickly, and access to powerful models is becoming increasingly widespread.

As this happens, the true differentiator will shift from which model you use to how well you design the systems around it.

The most capable AI applications will be those that excel at:

- retrieving relevant information

- managing memory

- integrating tools

- structuring context

- orchestrating reasoning

These are all problems of context architecture.

Final Thoughts

Large language models are powerful reasoning engines.

But they operate entirely within the boundaries of the context they receive.

If the context is incomplete, the answers will be generic.

If the context is noisy, the reasoning becomes unreliable.

But when context is carefully designed, the same model can produce outputs that feel dramatically more intelligent.

The key insight is simple:

Models generate answers. Context creates intelligence.

As AI systems evolve from simple chat interfaces into assistants and autonomous agents, context engineering will become a foundational discipline in AI development.

The future of AI will not just be about better models.

It will be about building better systems around them.